A Wide-area Monitoring Technique of National Route using Visual IoT System

1. Introduction

Traffic engineering is currently witnessing substantial advancements in real-time image processing systems designed for evaluating traffic volumes through machine learning techniques [1], [2]. These developments have facilitated numerous practical applications and services [3]. Traditional traffic monitoring approaches predominantly utilize overhead cameras above streets, which are subject to installation environment limitations [4].

Coastal roads, especially national highways, require continuous monitoring because of their critical role in transportation. In Japan, citizens' daily lives in many small towns, including transportation, commercial activities, and education, depend heavily on coastal roads, making traffic congestion a significant disruptor of inter-municipal travel. While positioning cameras along the coastline promises wider coverage, long distances pose serious detection challenges, such as targets that occupy only a few pixels and obstacles that block the view. This environment demands high performance and functionality from the system. Moreover, a horizontal view of the road, as opposed to an overhead view, complicates vehicle detection using machine learning techniques.

This paper aims to overcome these issues by implementing a comprehensive system leveraging the DeepSort algorithm for continuous vehicle tracking [5]. After developing a processing algorithm and implementing a monitoring system design using advanced visual IoT (Internet of Things) technologies [6]-[9], the system processes real-time data from video footage captured along National Route 398 in Onagawa Town, Miyagi Prefecture. Our noteworthy improvements include the use of custom learning data tailored for horizontal line-of-sight imagery and sophisticated methods to mitigate image shaking.

The remainder of this paper is organized as follows. Section 2 reviews prior research on traffic detection methodologies and introduces the concept of visual IoT. Section 3 details the applied traffic detection method. Section 4 presents the results of traffic volume evaluation on National Route 398 in Japan. Section 5 discusses the results and future work. Section 6 concludes the paper with a summary of key insights and future implications.

2. Background

2.1 Related Studies

Numerous studies have previously explored vehicle detection from video sources. A straightforward approach known as Frame Difference [10] detects moving objects by analyzing changes between consecutive frames. Alternatively, the method developed by [11] computes an average frame to serve as background reference information. Optical flow-based techniques [12] further extend this by analyzing the flow vector characteristics of moving entities over time, expressing direction through motion vectors.
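As a minimal illustration of the frame-difference idea, the sketch below marks pixels whose gray level changes by more than a threshold between two consecutive frames. The frames are tiny hand-made grayscale grids and the threshold value is an arbitrary assumption; this is not the implementation used in [10].

```python
def frame_difference_mask(prev_frame, curr_frame, threshold=25):
    """Return a binary mask: 1 where the gray level changed by more than threshold."""
    return [
        [1 if abs(curr - prev) > threshold else 0
         for prev, curr in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev_frame, curr_frame)
    ]


# Two hypothetical 2x4 grayscale frames; a bright object appears in the top row.
prev_frame = [[10, 10, 10, 10],
              [10, 10, 10, 10]]
curr_frame = [[10, 200, 200, 10],
              [10, 10, 10, 10]]

mask = frame_difference_mask(prev_frame, curr_frame)
print(mask)
```

Pixels set to 1 form the candidate moving region; in practice the mask would still need noise filtering and grouping into objects.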

Feature-based methods such as Scale Invariant Feature Transformation (SIFT) [13] and Haar-Like Feature [14] leverage appearance features for detection. SIFT is noted for its robustness against rotational and scale variations, as demonstrated in applications like [15], while Haar-Like features capitalize on brightness contrasts, proving effective in urban vehicle monitoring scenarios [16].

The challenge lies in applying this research in practice. Notably, camera placement is critical; cameras are typically mounted directly overhead to provide comprehensive lane coverage, which adds significant installation and wiring costs [11].

This paper presents a method that addresses these challenges by offering a practical and cost-effective solution that simplifies camera installation compared with traditional setups. Conventional systems typically require stable overhead positioning to mitigate issues such as wind-induced shaking and obstructions. In contrast, our approach allows for more flexible side-view installations, which typically result in smaller and potentially blurred vehicle images. In coastal road side-view monitoring scenarios (Fig. 1), vehicles appear with reduced pixel size, complicating the application of machine learning algorithms such as YOLO [17], which is optimized for higher-resolution images.

To overcome these imaging drawbacks, we employ advanced image stabilization technology and augment the training datasets to improve small object detection performance. Furthermore, the integration of DeepSort facilitates continuous vehicle tracking, even amid temporary visual obstructions. Consequently, our approach achieves detection outcomes comparable to conventional technologies, accompanied by lower costs and complexity.

2.2 Visual IoT Technology and Its Reusability

Visual IoT, as introduced by Iyer and Ozer in 2016, extends the traditional sensor IoT frameworks by incorporating economical video transmission systems using video or image sensors [6]. This framework employs cameras as sensors connected to the Internet.

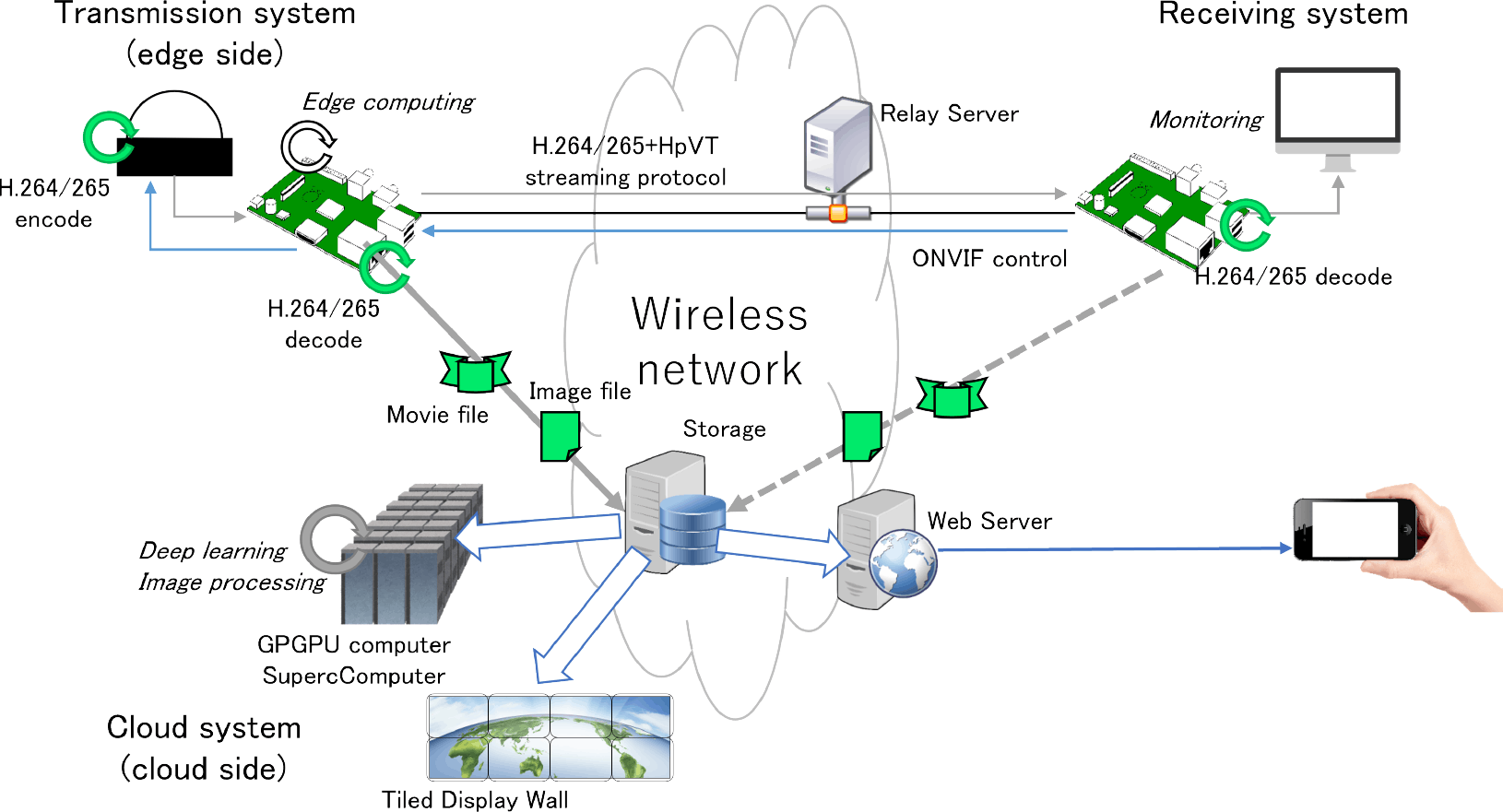

Our system illustrated in Fig. 2 utilizes a commercial IP network camera rather than developing proprietary hardware. This approach offers several advantages, including cost-effectiveness and high image quality, providing an attractive alternative for budget-conscious implementations. It also allows significant reusability, as pre-existing camera setups and network infrastructures can be easily repurposed or augmented with minimal financial and logistical overhead. Furthermore, these commercial devices support Real-Time Streaming Protocol (RTSP), optimizing both economic viability and video fidelity. Visual IoT technology has already been effectively employed by the authors in applications such as urban smoke detection [18] and monitoring volcanic plumes [19], demonstrating its reliability under various environmental conditions.

Overall, the flexibility and scalability of visual IoT make it a valuable tool in diverse contexts, ranging from urban safety measures to comprehensive environmental surveillance projects.

3. Traffic Detection Method

3.1 Target City

Onagawa Town, located in Miyagi Prefecture, is a small city in the Tohoku region spanning 65 km² with an approximate population of 6,000 residents. The town suffered considerable damage during the Great East Japan Earthquake on March 11, 2011. Positioned as a ria-style coastal city, Onagawa has the Pacific Ocean to the east and is surrounded by mountains and bays to the north, west, and south.

The primary road within Onagawa Town is National Route 398, which runs parallel to the JR (Japan Railway) Ishinomaki Line (Fig. 1). This road serves as the sole main route connecting Onagawa to the neighboring large city of Ishinomaki. Any closure of this road, due to heavy rain or other factors, often severely impacts local accessibility and transportation. Many towns across Japan face similar connectivity challenges; hence, effective monitoring and rapid response systems are crucial for ensuring safety and maintaining essential communication routes.

3.2 Dataset and Learning

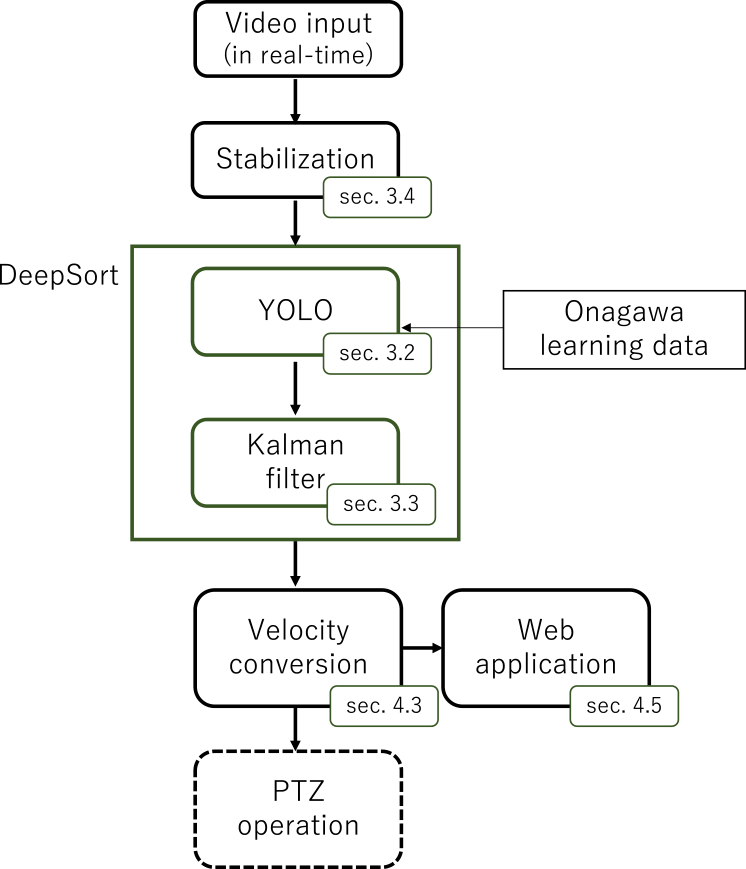

The flowchart of our proposed system's algorithm is depicted in Fig. 3. Initially, the algorithm receives video data and applies image stabilization [20], discussed in Section 3.4, to enhance visual clarity. The DeepSort algorithm, a fusion of YOLO object detection and Kalman filtering techniques, is then employed to detect and track vehicles within the video frames. To bolster the detection capabilities, we tailored our Onagawa learning dataset to fine-tune the YOLO model for identifying vehicular targets. The extracted track data is then converted into traffic volume information and presented to town residents through an intuitive web application.

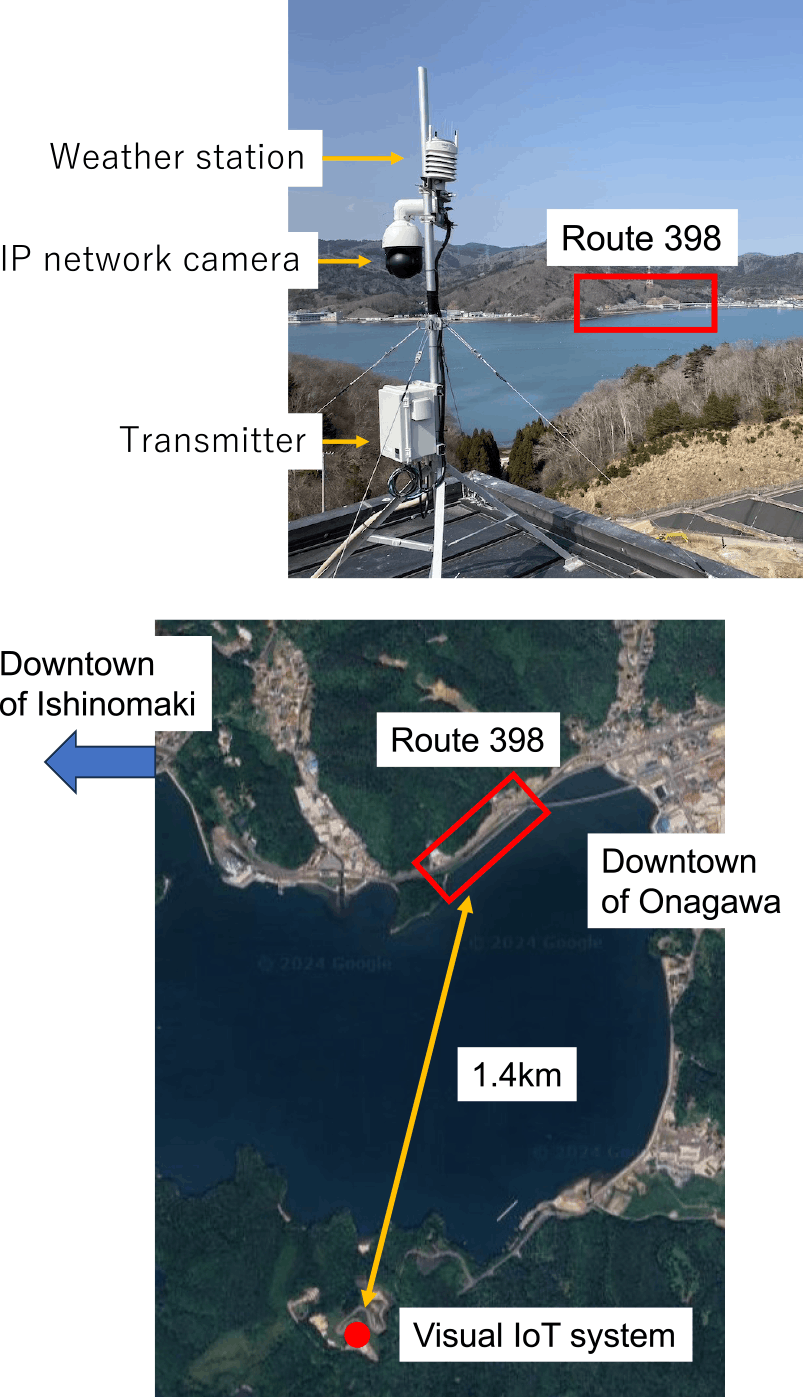

We installed this system on the rooftop of the Onagawa city clean center building, which overlooks National Route 398 (Fig. 4) from a distance of approximately 1.4 km. We applied the proposed method to frame images from the monitoring video of National Route 398, as shown in Fig. 1. The monitoring camera, a HIKVISION DS-2DE4425IW-DE, delivers an image resolution of 1920×1080 pixels at 25 fps.

Due to the small size of vehicles in the images (around 100×50 pixels), achieving high accuracy with a pre-trained YOLOv4 COCO model is challenging. As noted by Kisantal et al. [17], small objects often suffer from insufficient representation in training datasets, leading to subpar detection accuracy.

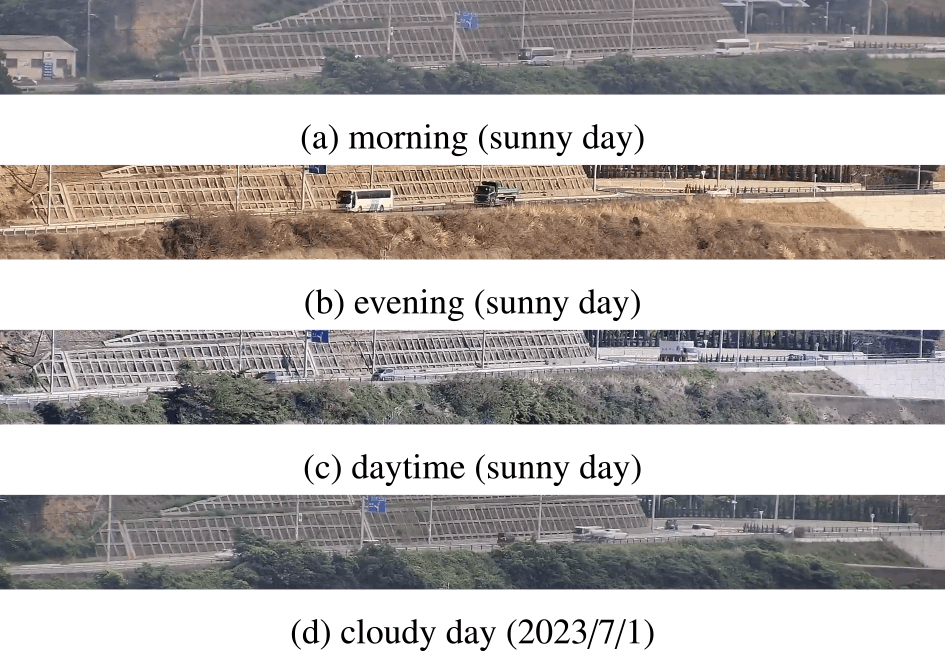

To improve detection performance, we curated a specialized dataset from our Onagawa monitoring videos, comprising 1920×192 pixel road images captured under diverse weather conditions and at various times of the day, as demonstrated in Fig. 5. The training data spans August 29, September 1, November 4, and November 8, 2021.

This diverse dataset improves model robustness and detection accuracy. A total of 3,400 daytime vehicle images were annotated across four classes: passenger cars, trucks, buses, and motorcycles. The annotations are stored in Pascal VOC format, ensuring compatibility with the YOLO framework. The YOLOv4 model was trained for 100 iterations with a learning rate of 0.001, a batch size of 16, and a momentum of 0.937, tuned for optimal performance.
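Pascal VOC annotations store each box as pixel corner coordinates, whereas Darknet-style YOLO label files commonly use a class index followed by a normalized box center and size. If such a conversion step is needed, it can be sketched as below. The class-to-index mapping, the function names, and the example box are hypothetical; the paper does not specify them.

```python
# Hypothetical class indices for the four annotated classes.
CLASS_IDS = {"car": 0, "truck": 1, "bus": 2, "motorcycle": 3}


def voc_to_yolo(box, image_width, image_height):
    """Convert (xmin, ymin, xmax, ymax) in pixels to normalized (xc, yc, w, h)."""
    xmin, ymin, xmax, ymax = box
    x_center = (xmin + xmax) / 2.0 / image_width
    y_center = (ymin + ymax) / 2.0 / image_height
    width = (xmax - xmin) / image_width
    height = (ymax - ymin) / image_height
    return x_center, y_center, width, height


def yolo_label_line(class_name, box, image_width, image_height):
    """Format one annotation as a YOLO-style text label line."""
    xc, yc, w, h = voc_to_yolo(box, image_width, image_height)
    return f"{CLASS_IDS[class_name]} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"


# Example: a 100x50 pixel car in a 1920x192 road strip (made-up coordinates).
yolo_box = voc_to_yolo((960, 96, 1060, 146), 1920, 192)
label = yolo_label_line("car", (960, 96, 1060, 146), 1920, 192)
print(label)
```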

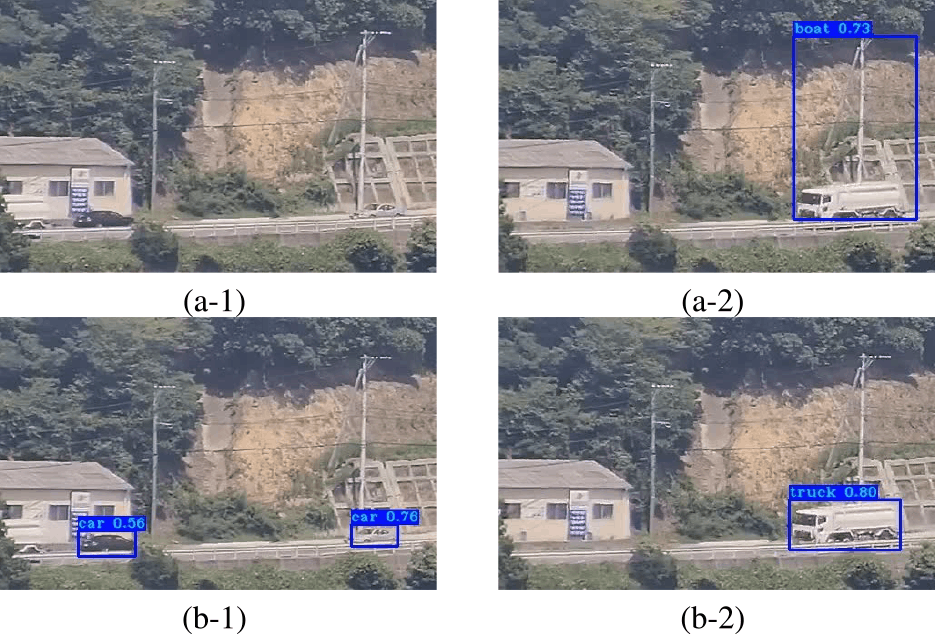

The comparisons in Fig. 6 highlight the accuracy of our approach. Whereas the original model fails to detect two vehicles (Fig. 6(a)), our fine-tuned model successfully identifies them (Fig. 6(c)). Additionally, while the original model misclassifies a bus as a yacht in Fig. 6(b), our model categorizes it correctly (Fig. 6(d)).

3.3 Tracking

In the proposed method, the camera captures the road from a side angle, offering cost benefits compared to overhead installations. However, this perspective can introduce obstructions from vegetation, guardrails, or other vehicles on the road [21]. Our system utilizes Kalman filtering to overcome these challenges, maintaining trackability despite such interruptions.
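The way a Kalman filter bridges an occlusion can be illustrated with a deliberately simplified one-dimensional constant-velocity filter: while detections are available the state is corrected, and while the vehicle is hidden only the prediction step runs. The class name, the noise variances, and the 5 pixel-per-frame motion are assumptions chosen for illustration; the filter inside DeepSORT tracks a higher-dimensional bounding-box state.

```python
class ConstantVelocityKalman1D:
    """1-D Kalman filter with state (position, velocity) and position-only measurements."""

    def __init__(self, position, velocity=0.0, process_var=1.0, measurement_var=4.0):
        self.x = float(position)
        self.v = float(velocity)
        self.p = [[10.0, 0.0], [0.0, 10.0]]  # state covariance (assumed initial value)
        self.q = process_var                 # simplified diagonal process noise
        self.r = measurement_var

    def predict(self, dt=1.0):
        """Propagate the state one step: x <- x + v*dt, P <- F P F^T + Q."""
        self.x += self.v * dt
        (p00, p01), (p10, p11) = self.p
        self.p = [
            [p00 + dt * (p01 + p10) + dt * dt * p11 + self.q, p01 + dt * p11],
            [p10 + dt * p11, p11 + self.q],
        ]
        return self.x

    def update(self, measured_position):
        """Correct the state with a position measurement (H = [1, 0])."""
        (p00, p01), (p10, p11) = self.p
        innovation = measured_position - self.x
        s = p00 + self.r
        k0, k1 = p00 / s, p10 / s
        self.x += k0 * innovation
        self.v += k1 * innovation
        self.p = [
            [(1.0 - k0) * p00, (1.0 - k0) * p01],
            [p10 - k1 * p00, p11 - k1 * p01],
        ]


# A vehicle moving 5 px/frame is detected for 10 frames, then hidden for 5 frames.
kf = ConstantVelocityKalman1D(position=0.0)
for frame in range(1, 11):
    kf.predict()
    kf.update(5.0 * frame)
for _ in range(5):  # occlusion: prediction only, no update
    predicted_position = kf.predict()
print(round(predicted_position, 1), round(kf.v, 2))
```

After the occlusion, the predicted position stays close to where the vehicle would actually reappear (75 pixels in this noise-free example), which is what lets a tracker reattach the same identity.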

Multiple object tracking (MOT) studies have explored intersection-over-union (IOU) trackers [22], which assume that detections contain few gaps across frames. These trackers associate each track with the detection that has the highest IOU value relative to its prior detection, in a greedy manner. The Simple Online and Realtime Tracking (SORT) method [23] instead combines a Kalman filter motion model with the Hungarian algorithm to optimize detection assignment. DeepSORT [5] extends SORT by using a convolutional neural network (CNN) to extract object appearance features and integrating them into the data association process through a deep association metric, which helps tracks survive prolonged occlusion periods.
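The greedy IOU association described above can be sketched as follows. The box format (x1, y1, x2, y2), the `min_iou` threshold, and the example coordinates are assumptions for illustration and are not taken from [22].

```python
def iou(box_a, box_b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def greedy_associate(tracks, detections, min_iou=0.3):
    """Match each track to its best-overlapping unused detection.

    tracks: dict mapping track id -> last known box
    detections: list of boxes in the current frame
    Returns (matches, unmatched), where matches maps track id -> detection index.
    """
    matches = {}
    unused = set(range(len(detections)))
    for track_id, last_box in tracks.items():
        best_index, best_score = None, min_iou
        for index in unused:
            score = iou(last_box, detections[index])
            if score >= best_score:
                best_index, best_score = index, score
        if best_index is not None:
            matches[track_id] = best_index
            unused.remove(best_index)
    return matches, sorted(unused)


tracks = {1: (100, 50, 130, 65), 2: (400, 52, 430, 67)}
detections = [(405, 52, 435, 67), (105, 50, 135, 65), (800, 50, 830, 65)]
matches, unmatched = greedy_associate(tracks, detections)
print(matches, unmatched)
```

Unmatched detections would start new tracks. SORT replaces the greedy loop with an optimal assignment over Kalman-predicted boxes, and DeepSORT adds appearance features to the matching cost.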

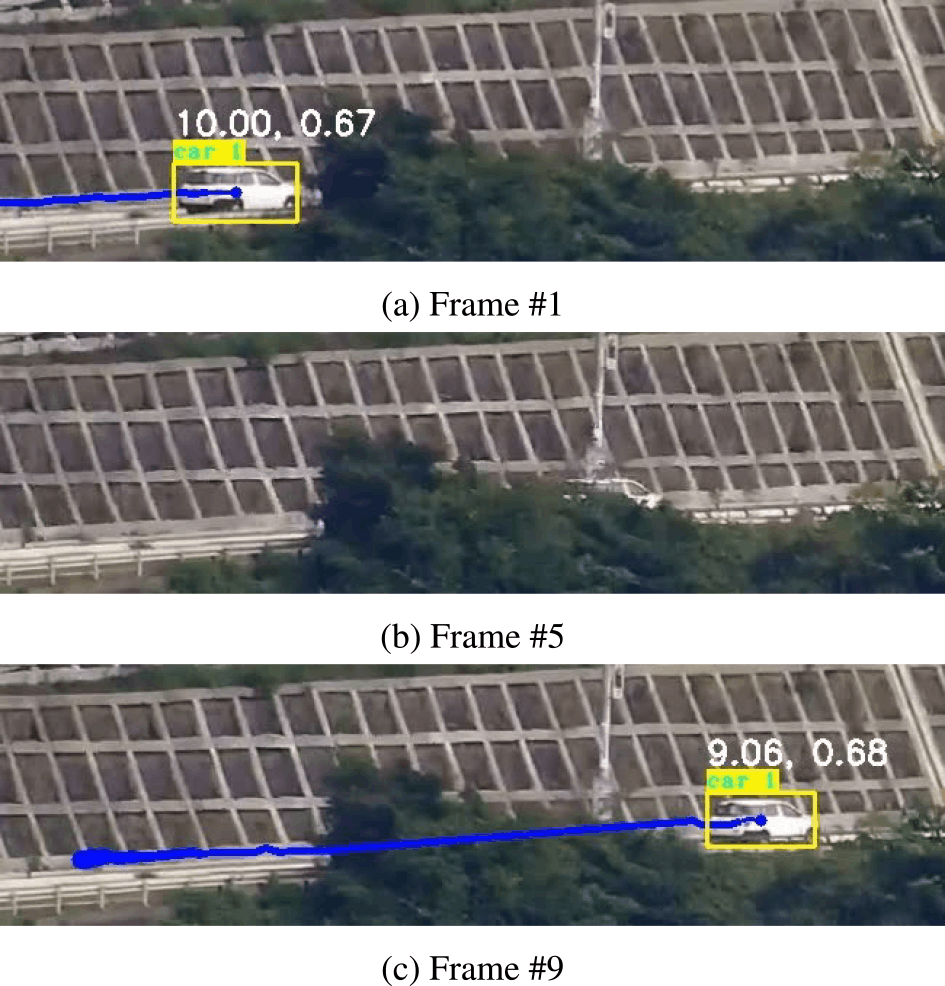

Figure 7 illustrates successful vehicle tracking results with our proposed method. Images at varying frame intervals are shown with detected areas in yellow and tracked trajectories in blue. Despite blockages such as foreground vegetation, effective tracking ensures consistent identification of the same vehicle (labeled as “car 1”) throughout the frames. This prevents the same vehicle from being misinterpreted as separate entities, facilitating precise traffic volume measurements. Note that these results rely on the stabilization technique described in Section 3.4.

3.4 Image Stabilization

Image stabilization is crucial for accurate tracking and speed detection, especially for outdoor cameras prone to wind-induced sway [24]. Video stabilization techniques, commonly categorized into mechanical, optical, and digital methods, aim to compensate for camera shake. We employ a digital correction technique that is independent of hardware configuration, ensuring adaptability across diverse cameras. This stabilization process leverages AKAZE features [25] for feature matching between frames, followed by image deformation based on the matched segments, as proposed by Murakami et al. [20].
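To give a feel for how matched feature points can be turned into a correction, the sketch below estimates a translation-only offset as the median displacement between matched points and applies it to a coordinate. This is a simplified stand-in, not the method of [20]: the point lists are made up, feature extraction and matching (AKAZE in the paper) are assumed to have been done already, and the actual technique deforms the image rather than applying a single shift.

```python
from statistics import median


def estimate_translation(reference_points, frame_points):
    """Median (dx, dy) that maps matched frame points onto the reference frame."""
    if not reference_points or len(reference_points) != len(frame_points):
        raise ValueError("point lists must be non-empty and of equal length")
    dx = median(ref[0] - cur[0] for ref, cur in zip(reference_points, frame_points))
    dy = median(ref[1] - cur[1] for ref, cur in zip(reference_points, frame_points))
    return dx, dy


def apply_translation(point, translation):
    """Shift a point from the shaken frame into reference-frame coordinates."""
    return point[0] + translation[0], point[1] + translation[1]


# Hypothetical matches: the frame is shifted by (+10, -3) px; the last pair is a bad match.
reference_points = [(100, 50), (400, 80), (900, 120), (1500, 60)]
frame_points = [(110, 47), (410, 77), (910, 117), (1700, 200)]

translation = estimate_translation(reference_points, frame_points)
stabilized_center = apply_translation((660, 97), translation)
print(translation, stabilized_center)
```

Using the median rather than the mean keeps a single wrong match from dominating the estimate, which matters when vegetation or moving vehicles produce spurious correspondences.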

Figure 8 illustrates the stabilization process flow. The method begins by fixing an initial frame as a reference. Subsequently, the matching index Mi between the reference and subsequent frames is calculated. If |Mi-1|<Mthreshold, the subsequent frame is stabilized and replaces the original, ensuring similar content across frames. This process iterates through each frame, skipping matching if the index exceeds the threshold.

![Flow of stabilization technique [20].](TR0702-05/image/7-2-5-8.png)

The consequences of omitting digital stabilization are evident in Fig. 9. Pre-stabilization frames exhibit significant frame-to-frame fluctuations in vehicle position, rendering traditional tracking methods ineffective. This can result in vehicles being counted multiple times, thereby compromising tracking accuracy. Conversely, post-stabilization frames demonstrate consistent tracking of a single vehicle, highlighting the importance of stabilization for accurate results. Given that the average vehicle spans about 30 pixels, a mere 10-pixel blur can significantly disrupt tracking accuracy.
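As a rough worked example of this sensitivity, consider two bounding boxes of equal width w = 30 pixels and equal height h, offset horizontally by d = 10 pixels. The identical box sizes and the purely horizontal offset are simplifying assumptions, not measurements from this study. Their intersection-over-union is

```latex
\[
\mathrm{IoU} = \frac{(w - d)\,h}{(w + d)\,h} = \frac{30 - 10}{30 + 10} = 0.5
\]
```

so a shake of one third of the vehicle width already halves the overlap on which an IoU-based association step would rely.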

![Detection results with (lower column) and without (upper column) stabilization technique [20].](TR0702-05/image/7-2-5-9.png)

4. Traffic Evaluation

To assess the efficacy of our visual IoT system for wide-area traffic monitoring, we focus on key performance metrics and their implications. This section discusses our goals linked to detecting traffic patterns and conditions, emphasizing practical applications in traffic management.

4.1 Evaluation Criteria

Recall and precision are fundamental metrics for assessing the efficacy of the detection method: recall reflects missed detections, and precision reflects the correctness of the predictions. Recall is the ratio of correctly predicted positive samples to the total number of actual positive samples, and precision is the ratio of correctly predicted positive samples to the total number of predicted positive samples. These metrics are expressed as:

\[ \mathrm{Recall} = \frac{\mathrm{TP}}{\mathrm{TP} + \mathrm{FN}}, \quad \mathrm{Precision} = \frac{\mathrm{TP}}{\mathrm{TP} + \mathrm{FP}} \tag{1} \]

where TP stands for true positives, FN for false negatives, and FP for false positives.

A trade-off exists between precision and recall, often consolidated into the F-score for holistic assessment. The balanced F-score (F1 score), representing the harmonic mean of precision and recall, is defined as:

\[ F_1 = \frac{2 \times \mathrm{Precision} \times \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}} \tag{2} \]
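Equations (1) and (2) can be computed directly from the counts, as in the short sketch below. The counts in the example are invented for illustration and are not results from this study.

```python
def detection_metrics(tp, fp, fn):
    """Return (precision, recall, f1) from true-positive, false-positive, false-negative counts."""
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical counts: 90 vehicles counted correctly, 5 spurious counts, 10 missed.
precision, recall, f1 = detection_metrics(tp=90, fp=5, fn=10)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

A vehicle that is counted twice because its track was broken adds a false positive, which lowers precision while leaving recall unchanged.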

4.2 Results Analysis and Key Observations

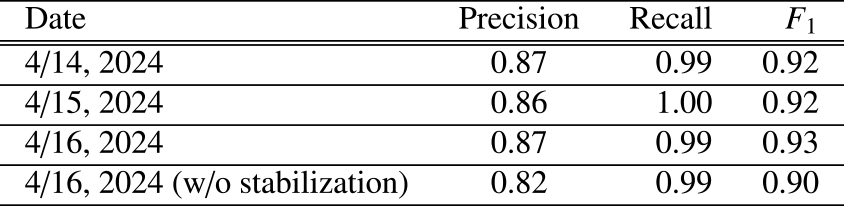

We applied the method to a dataset of 5-second video clips captured at 25 fps in Onagawa Town from April 14 to 16, 2024. Comparison with manual counts yielded F1 scores exceeding 90%, as detailed in Table 1.

On April 16, strong winds caused significant camera shake. We compared detection results with and without image stabilization. Without stabilization, the same vehicle was often detected as multiple distinct vehicles across consecutive frames, leading to reduced precision. These findings underscore the importance of stabilization in maintaining detection accuracy under challenging conditions.

Overall, the results demonstrate the system's reliability and robustness in real-world environments.

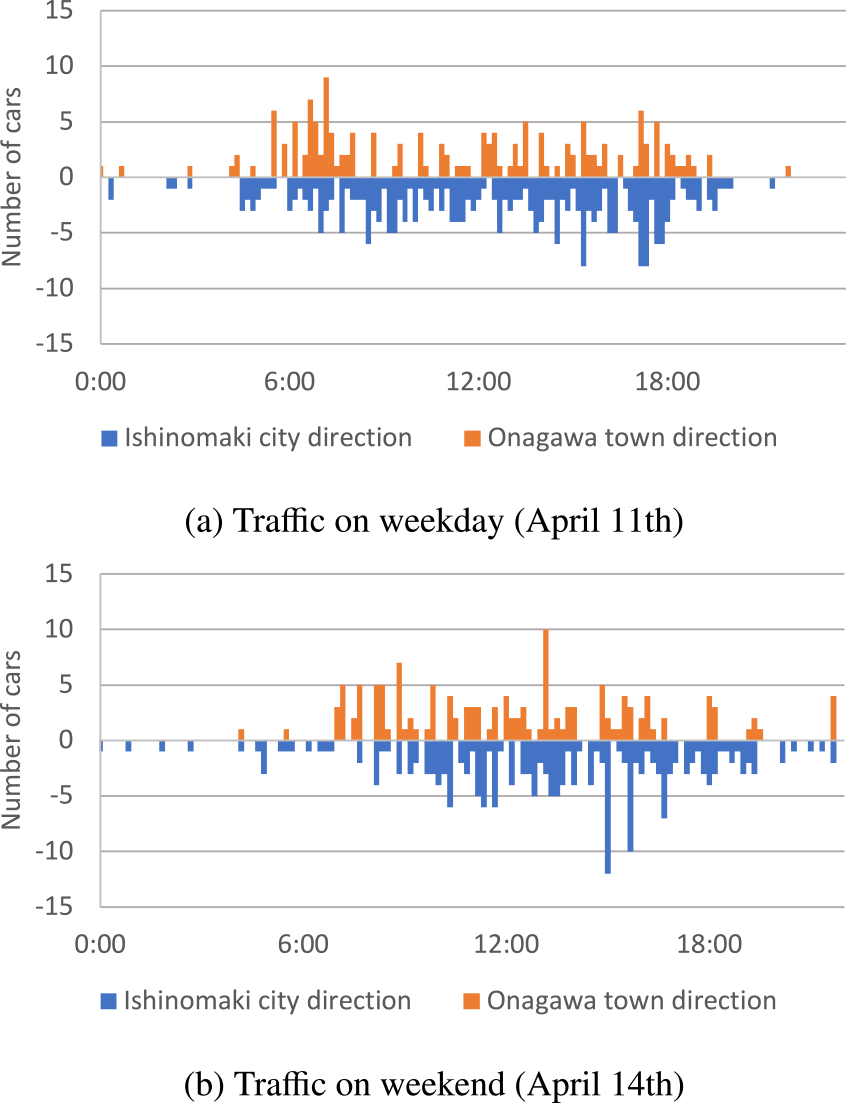

4.3 Daily Changes in Traffic Patterns

Figure 10 reveals differences in traffic patterns between weekdays and weekends, highlighting variations in traffic volume over time. In the graphs, the horizontal axis represents time, while the vertical axis depicts vehicle counts. Positive and negative signs on the vertical axis indicate the direction of travel. The graphs plot average traffic volumes for weekdays and weekends on sunny days in April 2024, with 144 data points each. The data reveal significant differences, particularly heavier weekday commuter traffic compared to weekends. On weekdays, morning and evening rush hours are prominent, with morning traffic (6 a.m. to 8 a.m.) directed towards Onagawa Town's commercial districts, which house numerous workplaces, and evening traffic (6 p.m. to 8 p.m.) directed towards Ishinomaki City's residential areas. Conversely, traffic on weekends is extremely light compared to weekdays until around 8 p.m.
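A signed, time-binned count of this kind can be derived from track records as sketched below. The sketch assumes that the 144 data points correspond to 10-minute bins over 24 hours, that each track is summarized by a timestamp and its start and end x-coordinates, and that rightward motion is plotted as positive; none of these details are stated in the text, and the sample tracks are invented.

```python
BIN_SECONDS = 600                       # assumed 10-minute bins
BINS_PER_DAY = 24 * 3600 // BIN_SECONDS  # 144 bins per day


def bin_tracks(tracks):
    """Count tracks per time bin, signed by direction of travel.

    tracks: iterable of (seconds_since_midnight, start_x, end_x)
    Returns (positive_counts, negative_counts), each a list of BINS_PER_DAY values.
    """
    positive = [0] * BINS_PER_DAY
    negative = [0] * BINS_PER_DAY
    for seconds_since_midnight, start_x, end_x in tracks:
        index = int(seconds_since_midnight) // BIN_SECONDS
        if not 0 <= index < BINS_PER_DAY:
            continue  # ignore timestamps outside the day
        if end_x > start_x:
            positive[index] += 1   # assumed: rightward motion plotted as positive
        elif end_x < start_x:
            negative[index] -= 1   # leftward motion plotted as negative
    return positive, negative


# Invented tracks: three shortly after 6:00 and one shortly after 18:00.
sample_tracks = [
    (6 * 3600 + 30, 100, 900),
    (6 * 3600 + 500, 120, 880),
    (6 * 3600 + 650, 900, 100),
    (18 * 3600 + 10, 950, 50),
]
positive_counts, negative_counts = bin_tracks(sample_tracks)
print(positive_counts[36], negative_counts[37], negative_counts[108])
```

Averaging such per-day arrays separately over weekdays and weekends would give curves comparable in form to those described for Fig. 10.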

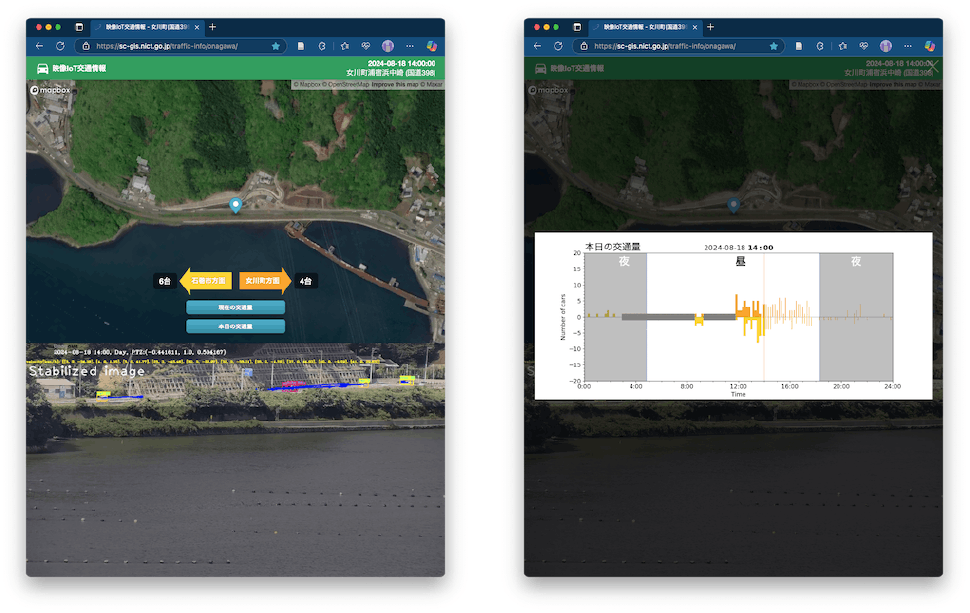

4.4 Web Application Integration

We have developed a web application to visualize the traffic data. As shown in Fig. 11, this application integrates camera feeds, map images, and vehicle detections. This design gives users an intuitive understanding of the traffic scene, allowing them to quickly identify vehicle locations and directions. The web application also features a button that, when tapped, displays a graph of the current and daily traffic volume. The graph shows how the number of vehicles varies over time. It can also be used to detect traffic jams and traffic stoppages caused by landslides.

5. Discussion

The demonstrated robustness of our proposed detection method over an extended period shows its potential for practical applications. Single-frame detection is insufficient for identifying vehicles obscured by obstacles; therefore, multi-frame tracking is employed to achieve a more accurate vehicle count. In this application, image stabilization improved tracking accuracy and reduced false positives, that is, instances where the same vehicle was counted multiple times.

Future enhancements will focus on edge processing capabilities, aiming to optimize transmission requirements and enhance privacy protection by performing detections at edge devices. Leveraging the wide-angle footage, we also plan to investigate its potential in anomaly detection. Tracking vehicles over long distances allows us to obtain velocity information and identify traffic patterns and bottlenecks that are not visible from localized observations. Additionally, we envision integrating pan-tilt-zoom (PTZ) camera functionality to enable automatic detection and enhance real-time data collection. This approach allows a single camera to monitor a broad area of roads, an advantage not achievable with overhead camera installations. Such integration will expand the applicability of visual IoT systems, making them valuable tools for detailed traffic analysis and responsive transportation management.

6. Conclusion

By leveraging visual IoT systems and innovative real-time image processing techniques, we have proposed methods to enhance traffic monitoring efficiency and accuracy. Through the integration of DeepSort technology and the evaluation of key parameters such as traffic volume, vehicle speed, and image quality improvements, our approach offers a comprehensive solution for assessing and managing traffic flow on coastal arterial routes.

The successful application of these technologies in monitoring National Route 398 in Onagawa Town, Miyagi Prefecture, showcases their potential for enhancing traffic management strategies and ensuring smoother transportation operations in coastal regions. Further research and implementation of these techniques hold promise for improving traffic monitoring systems and infrastructure planning along vital coastal road networks.

Acknowledgment

A part of this work was supported by the AMATERASS system operated by amaterass.org, Japan High Performance Computing and Networking plus Large-scale Data Analyzing and Information Systems (JHPCN) (Project ID: jh240077), and the Cross-ministerial Strategic Innovation Promotion Program (SIP) Phase 3 project “Development of a Resilient Smart Network System against Natural Disasters.” We thank Onagawa city for their support. The data processing was carried out on the STARS (SpatioTemporal data Analytic and Reciprocal Synchronization)-gis system.

References

- [1] Sivaraman, S. and Trivedi, M. M.: Looking at Vehicles on the Road: A Survey of Vision-Based Vehicle Detection, Tracking, and Behavior Analysis, IEEE Transactions on Intelligent Transportation Systems, Vol.14, No.4, pp.1773–1795 (online), DOI: 10.1109/TITS.2013.2266661 (2013).

- [2] Al-qaness, M. A. A., Abbasi, A. A., Fan, H., Ibrahim, R. A., Alsamhi, S. H. and Hawbani, A.: An improved YOLO-based road traffic monitoring system, Computing, Vol.103, No.2, pp.211–230 (online), DOI: 10.1007/s00607-020-00869-8 (2021).

- [3] Song, H., Liang, H., Li, H., Dai, Z. and Yun, X.: Vision-based vehicle detection and counting system using deep learning in highway scenes, European Transport Research Review, Vol.11, No.1, p.51 (online), DOI: 10.1186/s12544-019-0390-4 (2019).

- [4] Kastrinaki, V., Zervakis, M. and Kalaitzakis, K.: A survey of video processing techniques for traffic applications, Image and Vision Computing, Vol.21, No.4, pp.359–381 (online), DOI: 10.1016/S0262-8856(03)00004-0 (2003).

- [5] Wojke, N., Bewley, A. and Paulus, D.: Simple online and realtime tracking with a deep association metric, 2017 IEEE International Conference on Image Processing (ICIP), pp.3645–3649 (online), DOI: 10.1109/ICIP.2017.8296962 (2017).

- [6] Iyer, R. and Ozer, E.: Visual IoT: Architectural Challenges and Opportunities; Toward a Self-Learning and Energy-Neutral IoT, IEEE Micro, Vol.36, No.06, pp.45–49 (online), DOI: 10.1109/MM.2016.96 (2016).

- [7] Ji, W., Xu, J., Qiao, H., Zhou, M. and Liang, B.: Visual IoT: Enabling Internet of Things Visualization in Smart Cities, IEEE Network, Vol.33, No.2, pp.102–110 (online), DOI: 10.1109/MNET.2019.1800258 (2019).

- [8] Murata, K. T., Mizuhara, T., Pavarangkoon, P., Yamamoto, K., Muranaga, K. and Aoki, T.: Design and Development of Real-Time Video Transmission System Using Visual IoT Device, Multi-disciplinary Trends in Artificial Intelligence (Kaenampornpan, M., Malaka, R., Nguyen, D. D. and Schwind, N., eds.), Cham, Springer International Publishing, pp.263–269 (online), DOI: 10.1155/2014/597368 (2018).

- [9] Hazra, A.: Promising Role of Visual IoT: Challenges and Future Research Directions, IEEE Engineering Management Review, Vol.51, No.4, pp.169–178 (online), DOI: 10.1109/EMR.2023.3304121 (2023).

- [10] Nguyen, P.-V. and Le, H.-B.: A Multi-modal Particle Filter Based Motorcycle Tracking System, PRICAI 2008: Trends in Artificial Intelligence (Ho, T.-B. and Zhou, Z.-H., eds.), Berlin, Heidelberg, Springer Berlin Heidelberg, pp.819–828 (2008).

- [11] Kanhere, N. K. and Birchfield, S. T.: Real-Time Incremental Segmentation and Tracking of Vehicles at Low Camera Angles Using Stable Features, IEEE Transactions on Intelligent Transportation Systems, Vol.9, No.1, pp.148–160 (online), DOI: 10.1109/TITS.2007.911357 (2008).

- [12] Sreedevi, I., Gupta, M. and Bhattacharyya, P.: Vehicle Tracking and Speed Estimation using Optical Flow Method, International Journal of Engineering Science and Technology, Vol.3 (2011).

- [13] Lowe, D.: Object recognition from local scale-invariant features, Proceedings of the Seventh IEEE International Conference on Computer Vision, Vol.2, pp.1150–1157 (online), DOI: 10.1109/ICCV.1999.790410 (1999).

- [14] Viola, P. and Jones, M.: Rapid object detection using a boosted cascade of simple features, Proceedings of the 2001 IEEE Computer Society Conference on Computer Vision and Pattern Recognition. CVPR 2001, Vol.1, pp.I–I (online), DOI: 10.1109/CVPR.2001.990517 (2001).

- [15] Gao, T., Liu, Z.-g., Gao, W.-c. and Zhang, J.: Moving Vehicle Tracking Based on SIFT Active Particle Choosing, Advances in Neuro-Information Processing (Köppen, M., Kasabov, N. and Coghill, G., eds.), Berlin, Heidelberg, Springer Berlin Heidelberg, pp.695–702 (2009).

- [16] Momin, B. F. and Mujawar, T. M.: Vehicle detection and attribute based search of vehicles in video surveillance system, 2015 International Conference on Circuits, Power and Computing Technologies [ICCPCT-2015], pp.1–4 (online), DOI: 10.1109/ICCPCT.2015.7159405 (2015).

- [17] Kisantal, M., Wojna, Z., Murawski, J., Naruniec, J. and Cho, K.: Augmentation for small object detection (2019).

- [18] Kikuta, K., Murata, K. T. and Murakami, Y.: A Daytime Smoke Detection Method Based on Variances of Optical Flow and Characteristics of HSV Color on Footage from Outdoor Camera in Urban City, Fire Technology, Vol.60, No.3, pp.1427–1452 (online), DOI: 10.1007/s10694-023-01522-4 (2024).

- [19] Kikuta, K., Murata, K. T. and Nishimura, T.: Sakurajima volcano monitoring using visual IoT and image analysis, The Volcanological Society of Japan 2024 fall meeting (2024).

- [20] Murakami, Y., Murata, K. T., Kikuta, K., Niimi, M., Kawanabe, T., Mizuhara, T., Aoki, T., Yamamoto, K., Nagatsuma, T., Kobayashi, K., Fukuzawa, K. and Pavarangkoon, P.: An Image Stabilization Technique for Long-durational Outdoor Footages Obtained by Visual IoT Systems, 2021 24th International Symposium on Wireless Personal Multimedia Communications (WPMC), pp.1–6 (online), DOI: 10.1109/WPMC52694.2021.9700467 (2021).

- [21] Cai, Y., Liu, Z., Wang, H., Chen, X. and Chen, L.: Vehicle Detection by Fusing Part Model Learning and Semantic Scene Information for Complex Urban Surveillance, Sensors, Vol.18, No.10 (online), DOI: 10.3390/s18103505 (2018).

- [22] Bochinski, E., Eiselein, V. and Sikora, T.: High-Speed tracking-by-detection without using image information, 2017 14th IEEE International Conference on Advanced Video and Signal Based Surveillance (AVSS), pp.1–6 (online), DOI: 10.1109/AVSS.2017.8078516 (2017).

- [23] Bewley, A., Ge, Z., Ott, L., Ramos, F. and Upcroft, B.: Simple online and realtime tracking, 2016 IEEE International Conference on Image Processing (ICIP), pp.3464–3468 (online), DOI: 10.1109/ICIP.2016.7533003 (2016).

- [24] Yaghoobi Ershadi, N.: Improving vehicle tracking rate and speed estimation in dusty and snowy weather conditions with a vibrating camera, PLOS ONE, Vol.12, No.12, pp.1–17 (online), DOI: 10.1371/journal.pone.0189145 (2017).

- [25] Alcantarilla, P.F., Nuevo, J. and Bartoli, A.: Fast Explicit Diffusion for Accelerated Features in Nonlinear Scale Spaces, British Machine Vision Conference, (online), available from〈https://api.semanticscholar.org/CorpusID:8488231〉(2013).

kazu.kikuta@nict.go.jp

Kazutaka Kikuta received the M.S. degree in 2014 and Ph.D. degree in 2017 from the University of Tokyo, Japan. He is currently working on image analysis using visual IoT at the National Institute of Information and Communications Technology.

Ken T. Murata received PhD of Engineering, Kyoto University in May 1995; Researcher, Faculty of Engineering (Department of Information Engineering), Ehime University in April 1995; Associate Professor, Center for Information Technology, Ehime University in April 2007; Director, Electromagnetic Measurement Research Center, National Institute of Information and Communications Technology in December 2008; Research Executive Director, General Testbed Research and Development Promotion Center, National Institute of Information and Communications Technology in April 2016. Specially appointed professor at Shinshu University and visiting professor at Kyoto University. Currently engaged in spatio-temporal data GIS platform project and resilient natural environment measurement project.

Tsutomu Nagatsuma received PhD of Science, Tohoku University in March 1995; Researcher, Communications Research Laboratory of the Ministry of Posts and Telecommunications (currently the National Institute of Information and Communications Technology, NICT) in April 1995; Currently, a Senior Researcher at the Quantum ICT Design Initiative, Quantum ICT Collaboration Center, NICT, concurrently serving as Research Manager of the Space Environment Laboratory, Electromagnetic Wave Propagation Research Center.

Junji Tokairin graduated from the Faculty of Engineering, Iwate University, in 1981. Retired from Fujitsu Limited in 2018 after engaging mainly in mobile communications and mobile device development. Since 2020, he has been affiliated with the National Institute of Information and Communications Technology (NICT) and is currently with the Resilient ICT Research Center.

Yuki Murakami finished the Graduate Department of Computer and Information Systems, the University of Aizu, in 2022. Since 2020, he has been affiliated with the National Institute of Information and Communications Technology (NICT) and is currently with the ICT Testbed Research and Development Promotion Center.

Accepted: December 9, 2025