The Development and Practice for Exhibiting an HCI Device in a Public Space—A Case Study of Sight: A Sonification Device Towards a Visual Perception without Eyes—

1. Introduction

As digital technologies spread throughout the world, Human-Computer Interaction (HCI) technologies have attracted the interest of not only professionals but also the general public. Therefore, presenting developed technologies to society in a form other than a scientific paper has become a serious concern for researchers and practitioners in the field of HCI. One of the most effective presentation media is the demonstration exhibition (hereinafter referred to as “exhibition”).

Exhibitions by researchers and practitioners have recently become popular in school exhibitions, solo exhibitions, museums, and contemporary art galleries. Exhibitions enable us to present developed technologies in an easy-to-understand manner through a hands-on experience. However, as people visiting exhibitions are unlikely to have adequate background knowledge related to the exhibited technologies, various trials and errors (hereinafter referred to as “practices”) are necessary to accurately convey the essence of the presenters' ideas through a one-shot hands-on experience.

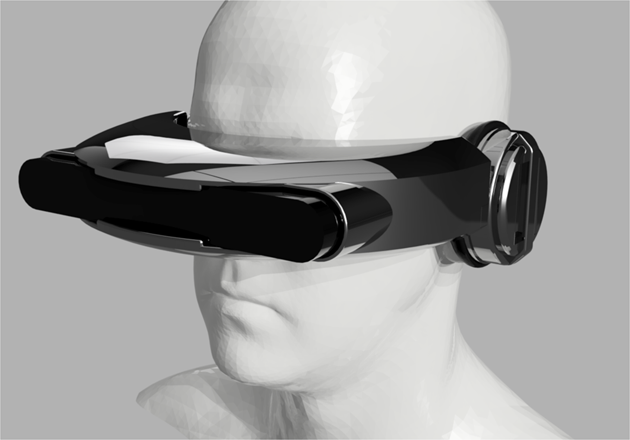

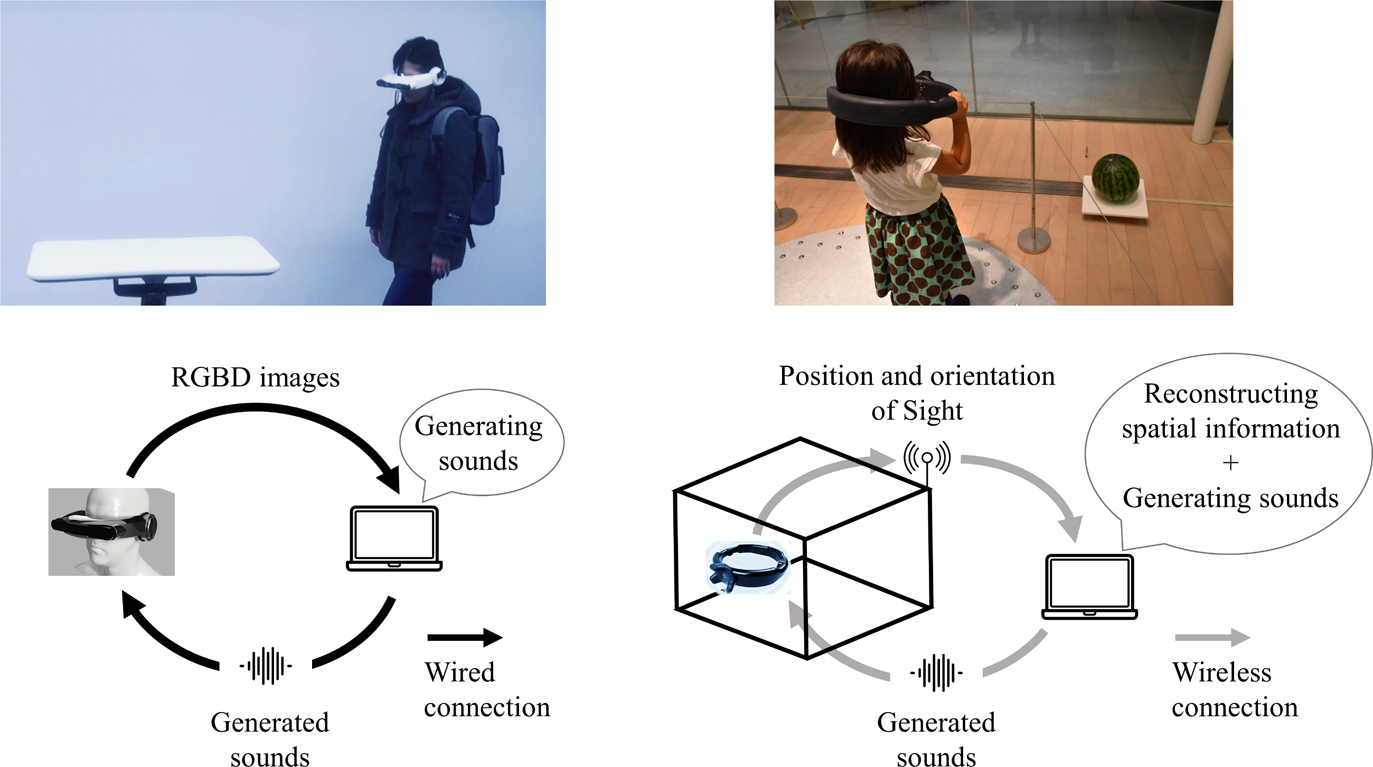

As a self-initiated project by four students with backgrounds in design and HCI, we have been developing Sight, a sensory augmentation device for realizing “vision” through sounds, without relying on visual information (Fig. 1). As a part of this project, we have collected practices for exhibiting Sight at museums, art galleries, and universities [1]-[7]. Through the project, we became aware that useful practices and know-how for such exhibitions have not been well documented in a reproducible form.

The purpose of this paper is to share our practices along with our thinking process, taking one of our past exhibitions as an example. We explain a three-month exhibition at the 21st Century Museum of Contemporary Art, Kanazawa (hereinafter, “21st museum”) in 2017. In this paper, we organize our practices using a tool called “Thinking Process Development Diagram (TPDD),” [8] which is used in engineering design. We believe that these organized practices are widely useful for designing demonstrations of HCI-related developments.

2. Overview of Our Exhibition

In this section, we introduce the overview of the exhibition at the 21st museum. First, we introduce Sight and the authors' motivation. Next, we explain the details of the exhibition.

2.1 Sight Project

Perceptions such as vision and hearing are generated based on information sensed by sensory organs such as the eyes and ears. Therefore, it is of interest whether animals and humans can obtain new perceptual experiences by feeding the brain with environmental information acquired through a new sensing organ. We have been developing Sight as such a new organ (Fig. 1). Sight is a sonification device that generates sounds according to the spatial information in front of a user. The question underlying the development of Sight is: “If we could convert spatial information into sounds and adjust ourselves to listening to them, could we have the sensation of searching for food and flying around by sounds, like dolphins and bats?” In this paper, we hereinafter refer to the activities related to Sight as the Sight project.

We believe that the new perceptual experiences with Sight could be applied to our daily lives in a variety of situations. For example, Sight could be used as a device to assist visually impaired people, to invent new games using sounds, or to create new interactions between visually impaired and sighted people, like blind soccer. However, the Sight project does not aim to develop a practical technology. Rather, the authors hope to understand the various ways of perception in the process of pursuing the possibility of realizing visual experiences through sounds. In addition, through exhibitions, we wish to provide an opportunity for individuals with different ways of perception to focus on each other's perceptual worlds. For these reasons, the Sight project has been actively presenting Sight through various exhibitions with hands-on sessions.

2.2 Overview of the Sight Exhibition at the 21st Museum

The exhibition at the 21st museum was held in an indoor room as a free-of-charge exhibition from early August to early November in 2017 (Fig. 2). The exhibition space was open from 10:00 to 18:00 (to 20:00 on Fridays and Saturdays), except on museum holidays. The 21st museum, which receives more than 2 million visitors annually, attracted many visitors even to the free-of-charge exhibition space. The number of visitors to the Sight exhibition space during the exhibition period was 70,729 in total [9].

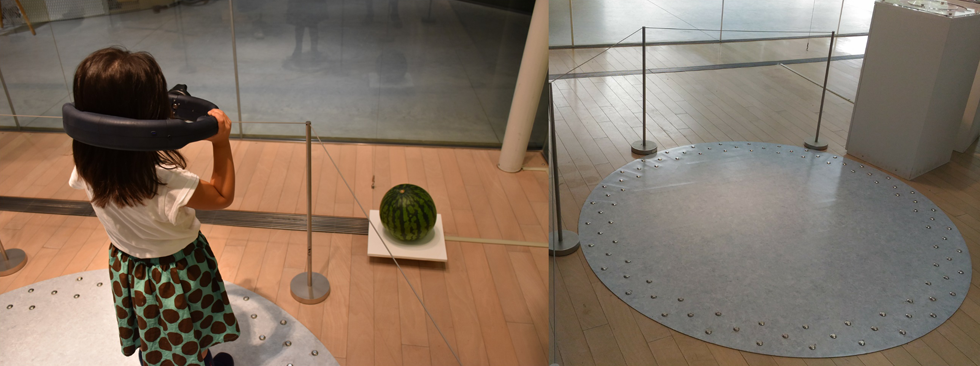

In this exhibition, objects with various appearances were placed in the space as the targets for sonification. We designed an experience in which a visitor looked around the exhibition space with Sight and heard stereophonic sounds from Sight that were generated based on the positional relationship between the visitor and the environment (i.e., objects and walls). Since the previous version of Sight (Fig. 1) was not suitable to be experienced by a large number of people, as described below, we redesigned Sight for this exhibition (Fig. 2). The new Sight was grasped with both hands and placed over the head. The visitors listened to sounds from the speakers installed on both sides of Sight, while their eyes were shielded by the housing of Sight.

The exhibition room was covered with glass walls, allowing visitors to see the interior from the outside. To take advantage of this feature, we placed the objects close to the walls so that they could be easily seen from outside. In addition to a hands-on space, we also posted explanatory posters on the walls. The visitors experienced Sight along the following flow line:

(1) Observing the objects through the glass walls before entering the exhibition room to experience the vision through the eyes.

(2) Observing the objects with Sight after entering the room to experience the sound-based vision through Sight.

(3) Viewing posters to understand the concept of the Sight project.

(4) Observing the objects through the glass walls again after leaving the room to experience the difference in the perception of “vision” before and after the experience.

In addition, we collected feedback from the visitors using tablet devices placed near the exit.

3. Visualization of the Thinking Process using an Engineering Design Method

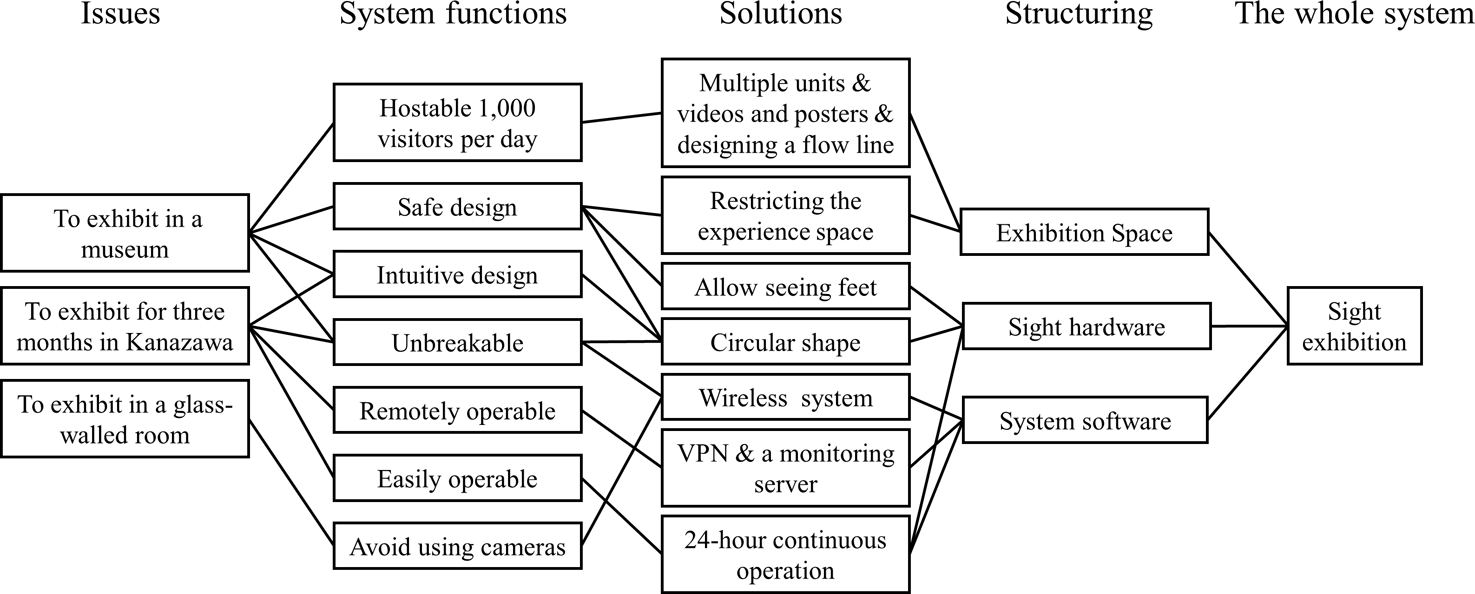

In this section, we visualize the thinking process to realize the exhibition described in Section 2. In the field of engineering design, a diagram called Thinking Process Development Diagram (TPDD) is often used to design a product that satisfies the developers' requirements of “we want to achieve this” [8] (Fig. 3). In this paper, we organize the issues and our practices for implementing this exhibition by applying a TPDD to the exhibition design.

The first step of constructing a TPDD is to identify the issues related to “what we want to achieve.” The next step is to identify system functions (i.e., elemental functions or requirement specifications) necessary to solve the problem. The third step is to consider the solutions (i.e., design solutions or practices) to realize the required functions. The last step is to consider a structure that incorporates the solutions. In this way, a design solution (e.g., an exhibition design) to solve the issues is given. In this section, we explain our thinking process following a TPDD.
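As a rough illustration of this four-step structure, the sketch below represents a TPDD as a small tree of issues, functions, and solutions. The class names, the `leaves` helper, and the grouping of functions under issues are assumptions made for illustration; they are not a transcription of Fig. 3, and only a fragment of the content of Sections 3.1 to 3.3 is shown.

```python
from dataclasses import dataclass, field


@dataclass
class Solution:
    name: str


@dataclass
class Function:
    name: str
    solutions: list[Solution] = field(default_factory=list)


@dataclass
class Issue:
    name: str
    functions: list[Function] = field(default_factory=list)


def leaves(issues):
    """Yield (issue, function, solution) name triples, one per design solution."""
    for issue in issues:
        for function in issue.functions:
            for solution in function.solutions:
                yield issue.name, function.name, solution.name


# Illustrative fragment only; the grouping is an assumption, not a copy of Fig. 3.
tpdd = [
    Issue("Exhibit Sight in a museum", [
        Function("Host 1,000 visitors per day", [
            Solution("Operate multiple units"),
            Solution("Use videos and posters"),
            Solution("Design a flow line"),
        ]),
    ]),
    Issue("Exhibit for three months in Kanazawa", [
        Function("Remotely operable", [Solution("Remote monitoring system")]),
    ]),
]

for triple in leaves(tpdd):
    print(" -> ".join(triple))
```

Listing the leaves in this way gives the set of solutions that the final structuring step has to combine into one design.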

3.1 Organizing the Issues

The following is a list of the exhibition conditions at the 21st museum that we knew at the start of the project:

- About the museum: the museum is located in Kanazawa city, geographically distant from Tokyo, where the authors are based. The visitors are diverse in gender, age, and nationality. The number of visitors to the museum is about 2 million a year.

- Exhibition space: a 6 x 9 m glass-walled space located near the outdoor space. The room has one entrance. The two walls are arranged so that visitors can easily see the inside of the room (Fig. 2). Since the room is located close to the edge of the building, the brightness of the room changes depending on the time of day and weather conditions.

- Schedule: the preparation period is four months. The exhibition period is three months. The exhibition room is open during the opening hours of the museum (i.e., 8 or 10 hours), except on days when the museum is closed.

Based on these conditions, we identified the following issues:

- We want to exhibit Sight in a museum: the 21st museum receives an unspecified number of people who have a variety of expectations. Therefore, we needed to create an exhibition that was not only safe but also easy to understand.

- We want to exhibit the system for three months in Kanazawa: as the system would be exhibited for a long period in a physically remote location, we needed to maintain and operate it without being there directly. In addition, we needed to monitor the status remotely and fix problems as necessary.

- We want to exhibit Sight in a glass-walled room: as the device was designed to sense visual information, optical conditions were an important factor in designing the device.

3.2 Organizing System Functions

We considered that the following functions should be realized to solve these three issues:

- Able to host 1,000 visitors per day: based on past exhibitions at the 21st museum, it was estimated that more than 1,000 visitors per day would visit the Sight exhibition space. Therefore, we needed to devise a way to host more than 100 visitors per hour.

- Safe and intuitive design: the visitors include children and elderly people. In addition, many visitors do not understand Japanese. The hands-on experience must be safe and easy to use for all of these visitors.

- Unbreakable: the Sight hardware should take into account the risk of breakdown due to repeated use, dropping, and mishandling. In addition, the Sight software must be easy to fix in the event of a failure.

- Remotely operable: in order to maintain, operate, and restore the system from a physically remote location, we needed to monitor and control the system status remotely.

- Easily operable: the system must be easy to operate for museum staff who do not have expert knowledge, in case remote operation is not possible.

- Avoid using cameras: our previous Sight system (Fig. 1) was equipped with an infrared depth sensor and an RGB camera sensor. These sensors do not work well in a glass-walled room for several reasons. First, the depth sensor measures the distance to an object from the time it takes for emitted infrared light to be reflected by the object and return to the sensor. The glass walls of the exhibition space transmit light, making it difficult to measure the distance to them using the depth sensor. Secondly, the optical condition of the glass-walled room varies during the day. Therefore, we wanted to avoid using RGB cameras, which are easily affected by the light source. For these reasons, we abandoned optical sensing and replaced it with external positional sensing. We considered this approach acceptable because our project aimed to explore the possibility of a vision-like experience induced by sound stimuli and not to focus on visual processing.

3.3 Organizing Solutions

In this section, we describe solutions that we practiced for realizing these system functions.

3.3.1 Practices for Hosting 1,000 Visitors per Day

How can we provide an exhibition for 100–1,000 visitors every day? We addressed this problem by operating multiple units, using videos and posters, and designing a flow line.

(1) Operating multiple units

Visitors need to spend a certain amount of time in the hands-on experience to understand the content. However, extending the hands-on time per person will reduce the number of people who can experience the exhibition. We installed two devices to mitigate this trade-off. The number of devices was decided based on the size of the experience space, the complexity of the system, and the cost of the device components.
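The trade-off can be made concrete with a back-of-the-envelope calculation. The sketch below assumes that every visitor tries a device and that no time is lost when handing a device over, neither of which is stated in the text; the function name is hypothetical.

```python
def seconds_per_visitor(visitors_per_hour: float, units: int) -> float:
    """Average hands-on time available per visitor if every visitor tries a device."""
    return units * 3600 / visitors_per_hour


print(seconds_per_visitor(100, 1))  # 36.0
print(seconds_per_visitor(100, 2))  # 72.0
```

Under these assumptions, at the target of 100 visitors per hour a second unit doubles the average hands-on time from 36 to 72 seconds.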

(2) Using videos and posters

Even if the number of units is increased, the number of people who can experience Sight is still limited. In addition, if the waiting time is too long, some people may hesitate to spend enough time on the experience because they are worried about the people behind them. Therefore, we prepared explanatory videos and posters in the exhibition space so that visitors could understand the purpose of Sight and leave with a sense of satisfaction even if they could not spend enough time on the hands-on experience (Fig. 4). We also displayed the previous version of Sight to illustrate the development of the Sight project. The text was written in Japanese and English.

In this exhibition, we set three levels of goals for the experience to be provided to the visitors: (1) to understand that wearing the device hides the field of vision and allows them to hear sounds, (2) to notice that the sounds change depending on the shape and form of the object they are looking at, and (3) to grasp what kind of information about the shape is converted into sounds, and to imagine the experience of “seeing” with the sounds. By setting these different goals, we aimed to provide several explanations according to the visitors' motivation, rather than aiming to provide a complete experience to all visitors.

(3) Designing a flow line for a smooth experience

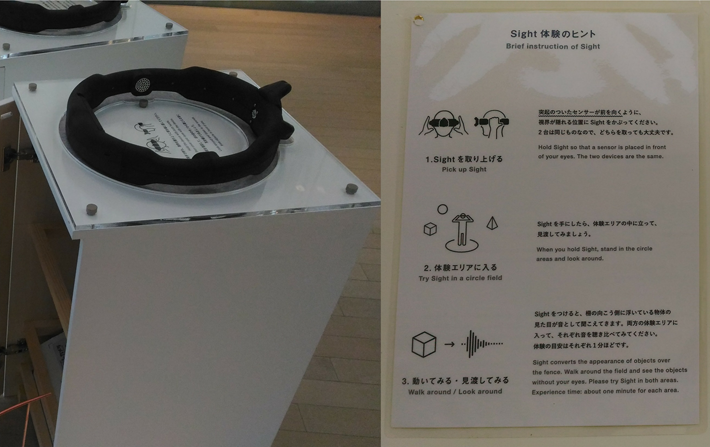

Designing a flow line is critical for an efficient experience. In this exhibition, we considered whether to provide the hands-on experience or the posters/videos first. As we wanted visitors to experience the devices without any preconceptions, we designed the flow line so that they could experience Sight first and be convinced by the posters/videos later. In addition, instructions on how to use Sight were placed in the waiting line to help visitors correctly use it without any prerequisite knowledge (Fig. 5).

3.3.2 Practices for Safe Design

Due to the nature of the concept, the previous version of Sight was designed to completely shield users' eyes. This design, however, is not suitable for an exhibition without instructors because walking around without being able to see one's feet is not safe. For a safe experience, we devised the following two solutions.

(1) Designing a device that allows the user to see his or her feet

We designed a device that does not completely shield the eyes, so that visitors can see their feet with their peripheral vision and move around safely while making the most of the exhibition space. As a solution, we adopted a circular shape with an inner diameter of 30 cm. Since the device was held with both hands by the user, the design allowed the user to start, pause, and stop the experience at any time of their own accord (Fig. 6, left).

(2) Restricting the experience space

In addition to the device shape, we designed the floor of the experience space. We prepared a boundary line and a colored sheet (Fig. 6, right) that indicated the experience area to prevent visitors from accidentally leaving the area and bumping into objects while wearing Sight. In addition, point rivets were placed near the boundary of the sheet so that visitors could notice, by the feel under their feet alone, that they were near the boundary of the experience area.

3.3.3 Practices for an Unbreakable System

(1) Devising the device shape

To improve the hardware robustness, we reconsidered the shape of Sight, which was goggle-shaped (Fig. 1). A hinged structure, as in goggles, tends to concentrate stress on the hinge, which leads to failure. In particular, when goggles are worn by a child with a small head or an adult with a large head, the hinge is subjected to high stress because it is rotated to fit the head. In fact, from our past experiences (i.e., practices), we knew that a 3D-printed hinge could be damaged when Sight was used by about 100 people a day.

To overcome this vulnerability, we redesigned Sight into a circular shape without any movable parts, which was also a practice for safe design. The circular shape is robust against external forces as it does not have a stress-concentrating structure like a hinge. The circular shape has further advantages: it does not fall to the floor even if a user accidentally drops it, because it is caught on the shoulders, and its intuitive appearance discourages unexpected uses. These features contribute to the robustness of the hardware. In addition, the circular shape can be constructed by combining arc-shaped parts, which reduces the number of different parts molded by a 3D printer compared to goggles. Fewer parts make it easier to assemble the device and to prepare spares.

The circular shape also contributes to the comfort of the experience. Because of its large inner diameter of 30 cm, the device does not contact the face of a user during use. This feature eliminates factors that may cause uncomfortable experiences, such as steaminess, make-up coming off, and messing up hairstyles. Providing a comfortable experience is an important issue in an environment where a large number of visitors come in the summer season.

Other ideas for a robust shape that fits various head sizes are introducing a sponge sandwiched between the device and the face (e.g., head-mounted displays) or using helmet-like hardware. However, from the standpoint of comfort, we considered that a circular shape was appropriate. In addition, designs not touching a user's face significantly reduce the risk of virus transmission through the device's surface.

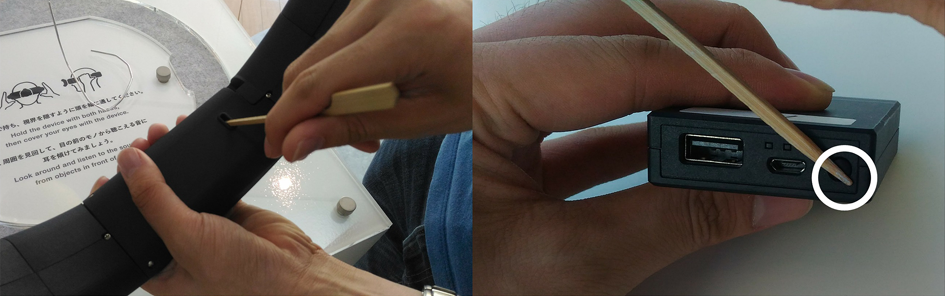

(2) Wireless system

The previous version of Sight ran with a laptop computer, which computed the sound conversion, connected to the device by USB wires (Fig. 7, left). However, physical wiring can cause various hardware breakdowns. In addition, a wired device is not user-friendly as users have to carry a backpack containing a laptop computer. To overcome these issues, we designed a wireless system (Fig. 7, right). Sight wirelessly sent its position and posture to a computer, and the generated sounds were wirelessly transmitted to the device via Bluetooth.

The advantages of a wireless system are that it (1) increases the freedom of movement of users, (2) prevents unexpected accidents such as getting caught in wires, and (3) avoids cables wearing out or breaking due to overuse. On the other hand, the disadvantages are (1) limited communication bandwidth and speed compared to wired connections, and (2) the inability to constantly supply power to devices through wires. As safety and robustness were the top priorities, we decided to adopt the wireless setup and compensate for these disadvantages.

First, as for the bandwidth issue, we decided to use a commercially available infrared-based motion tracking system [10] that captured the position and angle of a tracking sensor attached to Sight. Using the motion tracking system allowed us to achieve lightweight communication compared to sending images, since the number of transmitted parameters was limited to the six that describe the position and orientation of the tracking sensor. The system reconstructed the spatial information in front of a user based on this transmitted information and the positional information of the objects and walls registered in advance. The system then generated stereophonic sounds and sent them to the speakers placed on the device through a Bluetooth connection. Note that these calculations assume that the positions of the exhibited objects are fixed in the room. Using a motion tracking system was also a practice for avoiding the use of cameras (Fig. 3).
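To illustrate how a tracked pose and pre-registered object positions can stand in for a camera image, the following simplified sketch computes the distance and bearing of one object from the device and derives stereo gains from the bearing. It is not the exhibition software: it uses only the horizontal position and the yaw angle (pitch, roll, and height are ignored), and the coordinate convention, the function names, and the constant-power panning rule are all assumptions.

```python
import math


def relative_to_device(device_xy, yaw, object_xy):
    """Return (distance, bearing) of a registered object as seen from the device.

    yaw is the facing direction in radians, counterclockwise from the +x axis.
    bearing is in radians: 0 = straight ahead, positive = to the user's left.
    """
    dx = object_xy[0] - device_xy[0]
    dy = object_xy[1] - device_xy[1]
    distance = math.hypot(dx, dy)
    bearing = math.atan2(dy, dx) - yaw
    # Wrap the angle into [-pi, pi).
    bearing = (bearing + math.pi) % (2 * math.pi) - math.pi
    return distance, bearing


def stereo_gains(bearing):
    """Constant-power pan: return (left, right) gains for a bearing.

    Simplification: objects in front of and behind the user are not distinguished.
    """
    pan = -math.sin(bearing)  # -1 = fully left, +1 = fully right
    angle = (pan + 1) * math.pi / 4
    return math.cos(angle), math.sin(angle)


# Hypothetical layout: user at the origin facing +x, object 2 m to the left.
distance, bearing = relative_to_device((0.0, 0.0), 0.0, (0.0, 2.0))
left, right = stereo_gains(bearing)
print(distance, bearing, left, right)
```

Because the object positions are fixed and registered in advance, only the pose has to be transmitted for each update, which is what keeps the wireless link lightweight.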

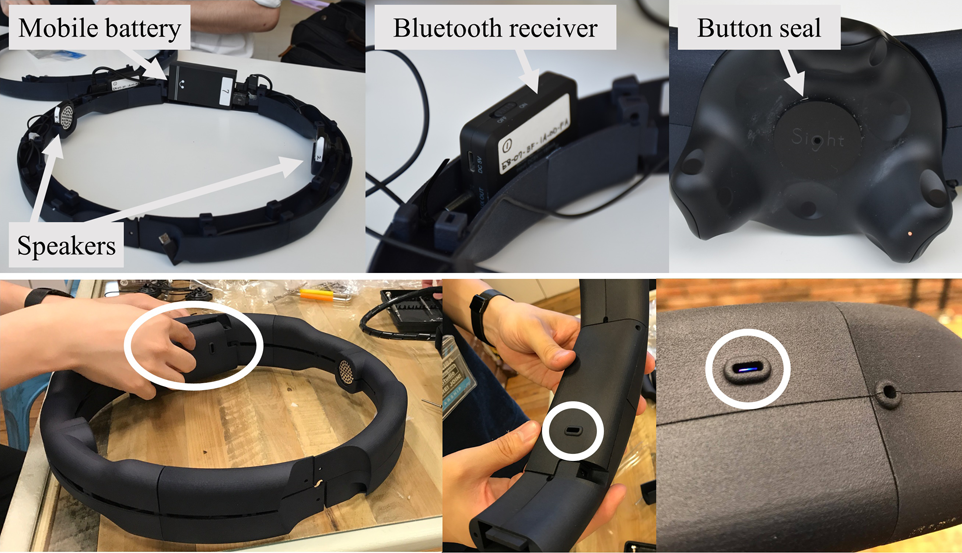

Secondly, as a countermeasure to the power supply problem, we embedded a mobile battery that powered devices during the daytime to achieve 10 hours of continuous operation. We considered two methods for charging the mobile battery: wireless charging using the Qi standard and wired charging using a USB cable. Although wireless charging eliminates a charging operation by charging Sight on its display stand when not in use, it requires a large number of components. Therefore, we adopted wired charging at night (Fig. 8).

3.3.4 Practices for a Remotely Operable System

(1) Remote monitoring system

To facilitate system status monitoring and recovery operations, we introduced a remote monitoring system. Specifically, we used a private VPN service to access computers running on the network in the 21st museum through remote desktop and SSH connections.

In addition to the accessing capability, we monitored the system remotely by uploading the images of the room and the sensors' status information (i.e., their locations and battery levels) to a separately prepared server. To capture the images, we attached a camera near the ceiling at an angle to view the whole room. A Raspberry Pi connected to the camera captured images every minute and uploaded them to the server with timestamps. The sensors' status information was uploaded from the computer that received the information. These monitoring capabilities helped to identify the cause of problems when they occurred.
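A periodic capture-and-upload loop of this kind could look like the sketch below. The `capture` and `upload` callables are hypothetical placeholders for the camera and server code, which the text does not describe; the `cycles` and `sleep` parameters exist only to make the loop easy to test.

```python
import time
from datetime import datetime, timezone


def monitor(capture, upload, interval_s=60, cycles=None, sleep=time.sleep):
    """Capture an image every interval_s seconds and upload it with a timestamp.

    capture() -> bytes and upload(timestamp, image) are hypothetical callables
    supplied by the caller; cycles=None runs forever.
    """
    done = 0
    while cycles is None or done < cycles:
        timestamp = datetime.now(timezone.utc).isoformat()
        try:
            upload(timestamp, capture())
        except Exception as error:  # keep monitoring even if one cycle fails
            print(f"{timestamp} upload failed: {error}")
        done += 1
        sleep(interval_s)
```

Catching errors inside the loop is a deliberate choice in this sketch: a temporary network failure should cost one image, not stop the monitoring.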

3.3.5 Practices for an Easily Operable System

(1) 24-hour continuous operation and preparation of a manual

As the 21st museum was physically located far away from the authors, we were unable to be there throughout the exhibition. In a long-term exhibition where artists cannot operate the system directly, they need to delegate the operation to a third party. In such a case, it is important to design a simple and easily operable system.

In this exhibition, we requested the museum staff to perform several on-site operations. To minimize the number of operations, we decided to keep the Sight system running and asked the staff to (1) insert a USB cable to charge Sight after the museum closed, and (2) unplug a USB cable and check if the system was running before the museum opened. If there was any problem with the system, we asked the staff to call us to fix the problem remotely. In addition, we prepared an operational manual and held a training session before the exhibition started.

3.4 Structuring

The practices described in Section 3.3 were combined into a single system at the structuring stage of the TPDD (Fig. 3).

3.4.1 Structuring of the Exhibition Space

We structured the exhibition space so that visitors could follow the flow line designed in Section 3.3.1. Specifically, the exhibited contents (i.e., experience spaces, posters, and videos) were arranged clockwise from the entrance in the order of preferred presentation; arranging the contents in one-stroke order prevented the human flow from stagnating. The direction of the route was decided based on the location of the experience space, which we wanted visitors to visit first. When the flow line broke down, the museum staff guided visitors.

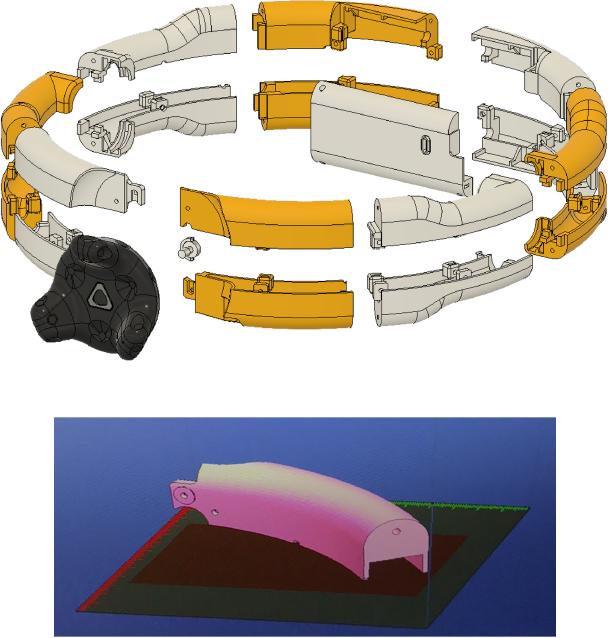

3.4.2 Structuring of the Sight Hardware

Since we had only four months to prepare for the exhibition, we mainly used commercial products and designed the housing of Sight to hold them (Fig. 9, top images). Note that the Bluetooth receiver shown in the figure is a device to receive the generated sounds transmitted from the computer. We also designed the housing to enable replacing the batteries and checking the status of the devices without disassembling (Fig. 9, bottom images). As a result of considerations for the aesthetic design of Sight, the tracking sensor was placed in a way that its power button was exposed to the outside. Thus, we protected the button with a thin plastic plate with a small hole for a thin rod to pass through (Fig. 9, the top right image). The button sealing allowed us to access the button while preventing the user from touching it accidentally.

3.4.3 Structuring of the Sight Software

The Sight system consisted of two separate sets of programs: (1) programs that obtained the position and posture of the tracking sensor and sequentially calculated its position relative to the objects, and (2) programs that generated the 3D sound corresponding to the relative position. Each of them was built on top of existing third-party software [11]-[13], and they were run on different machines to distribute the CPU load. The Open Sound Control (OSC) protocol, which is one of the most popular protocols for networking sounds in real time, was adopted for communication between the machines. As mentioned in Section 3.3.5, the Sight system was assumed to run continuously. Nevertheless, a batch file was prepared so that anyone could easily restart the system when a program crashed.
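For readers unfamiliar with OSC, the sketch below builds a minimal OSC 1.0 message by hand and sends it over UDP using only the Python standard library. The address `/sight/object`, the choice of arguments, and the host and port are hypothetical; the text does not describe the messages actually exchanged between the two machines.

```python
import socket
import struct


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII bytes, null-terminated, padded to 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *values: float) -> bytes:
    """Build an OSC 1.0 message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(values)
    payload = struct.pack(f">{len(values)}f", *values)  # big-endian float32
    return _osc_string(address) + _osc_string(type_tags) + payload


def send_osc(packet: bytes, host: str, port: int) -> None:
    """Send one OSC packet as a single UDP datagram."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))


# Hypothetical message: distance (m) and bearing (rad) of one object.
packet = osc_message("/sight/object", 2.0, 0.5)
print(len(packet), packet)
```

A call such as `send_osc(packet, "192.168.0.20", 9000)`, with the address of the sound-generating machine substituted, would deliver the message. Each update is only a few dozen bytes, which suits real-time exchange between machines.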

4. Additional Practices

In Section 3, we explained our thinking process for designing the exhibition using a TPDD. In an actual exhibition, various unforeseen problems arise. Therefore, in this section, we explain some other practices that were found during the exhibition as well as several unorganized practices that we believe are worth sharing.

4.1 Practices for Developing HCI Devices

This section describes practices that are not directly related to our exhibition design but may be useful for developing HCI devices in general.

(1) Molding with a black material

From our past experiences (i.e., practices), we knew that the devices would naturally get dirty and that paint would come off due to frequent handling. Therefore, we molded the device using a black material.

(2) Locations to place computers

As we used Bluetooth communications for receiving the tracking information and transmitting the sounds, we needed to place the computers as close as possible to the experience area, and in a place where visitors could not touch them accidentally. We installed the computers inside Sight's display stand (Fig. 5) and powered them from a power line on the floor. To prevent heat buildup inside the stand, we created gaps at the top and bottom of the side of the stand and generated airflow from the bottom to the top by running a cooling fan inside.

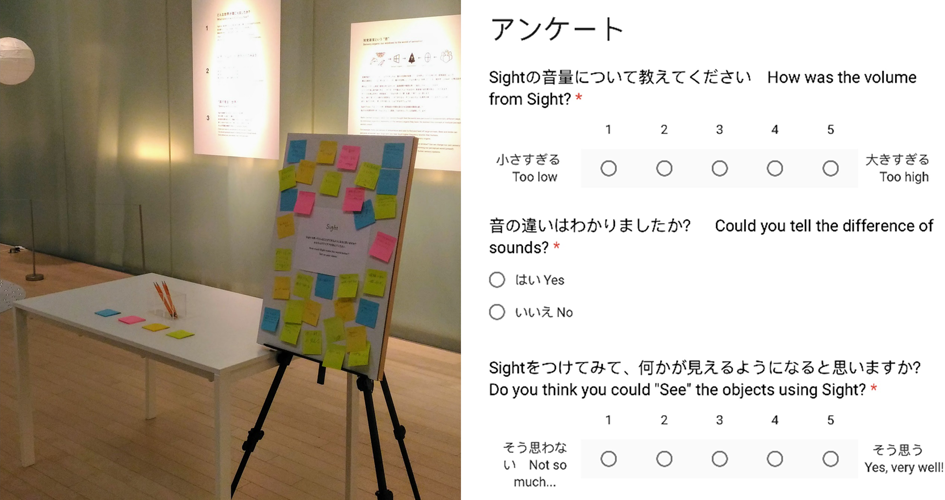

(3) Collecting opinions using a questionnaire

To collect opinions about the experience, we provided sticky notes and set up iPads running a questionnaire application in the room (Fig. 10). By analyzing the responses to the questionnaire, we were able to gather a variety of opinions about the application of the device.

For the iPad questionnaire, we utilized Google Forms so that the responses could be monitored remotely. As an unexpected effect of this method, we were able to quickly notice problems in the exhibition by periodically checking the responses to open-ended fields. For example, the response “the sound does not change” helped us notice a problem with the tracking sensor. We learned that a system for monitoring user feedback is effective for quickly fixing problems in remote exhibitions.

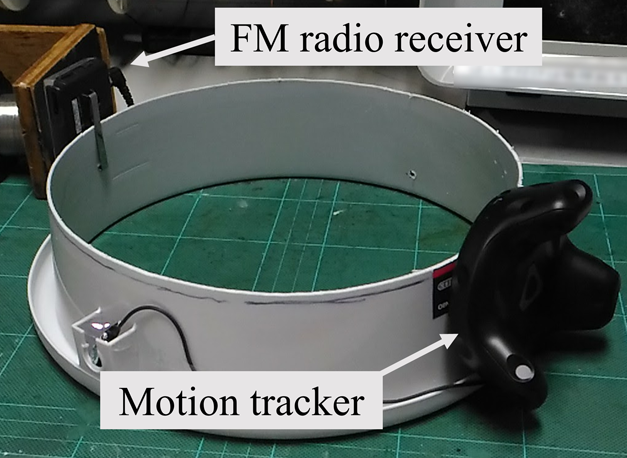

(4) Prototyping

We had to build the system from scratch within four months of preparation for the exhibition. Although the schedule was relatively tight, we made several prototypes during the preparation period to verify the feasibility of the practices we had devised. For example, to check the shape of the circular device, we cut a commercially available polyethylene bucket and attached the tracking sensor to it (Fig. 11).

Prototyping is a useful practice for deciding the best solution among several ideas. For example, for wireless audio transmission, we initially planned to adopt an analog communication method using an FM transmitter and an FM radio receiver (Fig. 11). However, the prototyping revealed that the analog method introduced noise. Because it was difficult to distinguish between a sound intentionally generated by the Sight system and noise introduced by external factors, we decided to adopt a digital communication method using Bluetooth.

(5) Know-how for modeling devices using a 3D printer

To facilitate prototyping, we designed the 3D model of Sight to be printed using a home-use 3D printer [14]. The fewer the parts of a device, the simpler the assembly procedure becomes. Therefore, we divided the model so that the number of parts would be as small as possible, while keeping the parts' sizes printable by the 3D printer (i.e., 140 mm x 140 mm x 135 mm) (Fig. 12).
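The trade-off between part size and part count can be explored numerically. The sketch below estimates the smallest number of equal arc segments of a flat ring whose bounding box fits a 140 mm x 140 mm bed. The 150 mm inner radius follows from the 30 cm inner diameter given in the text, but the 170 mm outer radius is a made-up value, and the sketch ignores the part height and any diagonal placement on the bed; it does not reproduce the actual division shown in Fig. 12.

```python
import math


def arc_bounding_box(inner_r, outer_r, n_segments):
    """Bounding box (width, depth) in mm of one of n equal arcs of a flat ring.

    The arc is laid symmetrically about the depth axis; valid for n_segments >= 2.
    """
    half_angle = math.pi / n_segments
    width = 2 * outer_r * math.sin(half_angle)
    depth = outer_r - inner_r * math.cos(half_angle)
    return width, depth


def min_segments(inner_r, outer_r, bed_w, bed_d, max_segments=64):
    """Smallest number of equal arcs whose bounding box fits the print bed."""
    for n in range(2, max_segments + 1):
        width, depth = arc_bounding_box(inner_r, outer_r, n)
        if width <= bed_w and depth <= bed_d:
            return n
    raise ValueError("no segmentation fits the bed")


# Inner radius from the text; the outer radius is an assumed value.
print(min_segments(150, 170, 140, 140))  # 8 under these assumptions
```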

While we tried to reduce the number of parts as described above, typical layer-by-layer (stacked-type) 3D printing can cause parts to warp due to resin shrinkage, especially when they are too large. We often encountered this problem when molding Sight. As a solution, we dipped the molded parts in boiling water and fixed them to a board with rubber bands to correct the warping. As a practice, we recommend avoiding parts that are too large, while considering the trade-off between the size of the parts and the number of parts.

(6) Considerations for obtaining visitors' personal information

As noted above, we collected responses to the questionnaire and monitored the exhibition room remotely through the images. In such a scenario, care is needed when collecting personal information that may lead to the identification of visitors. In the exhibition, we did not collect any information that could identify the visitors, such as their names, sexes, or ages, through the questionnaire. The images sent from the remote monitoring system were uploaded to a server accessible only to the members of the Sight project. In addition, we did not retain the images: the old images on the Raspberry Pi and the server were overwritten each time a new image was acquired.
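One simple way to implement such an overwrite-only policy is to always write to the same file name, as in the sketch below. The file name `latest.jpg` and the function name are hypothetical, and the atomic swap through a temporary file is a design choice of this sketch rather than something described in the text.

```python
import os
import tempfile
from pathlib import Path


def store_latest(image: bytes, directory: Path, name: str = "latest.jpg") -> Path:
    """Replace the single stored image so that no history accumulates."""
    directory.mkdir(parents=True, exist_ok=True)
    target = directory / name
    # Write to a temporary file first, then swap it in atomically so that a
    # reader never sees a half-written image.
    fd, tmp_name = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "wb") as tmp:
        tmp.write(image)
    os.replace(tmp_name, target)
    return target
```

With this approach the storage holds at most one image at any time, so no separate deletion job is needed.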

4.2 Problems Found during the Exhibition

(1) Low sound volume

The circular shape, which was intended to be safe and robust, functioned as expected. To the best of our knowledge, there were no accidents related to falling or dropping Sight during the exhibition period. In addition, the hardware of Sight did not break down. On the other hand, the large diameter of Sight (i.e., 30 cm) increased the distance between the speakers and the ears, so the sounds were heard at a low volume. This problem was more pronounced when the head of the user was small or when ambient sounds were loud due to crowds or announcements in the museum. In these cases, the users placed an ear close to either the left or the right speaker and gave up the sounds from the opposite speaker, and thus the stereophonic effect was not obtained. This observation suggests the importance of anticipating the physical characteristics of users and the various environments. In our case, we should have designed the inner diameter of the device to be smaller, or prepared devices with several different diameters.

(2) Maloperation by children

Although we activated a parental control function of the iPads to prevent unexpected operations (e.g., shutdown), there was a case in which the application shut down and could not be recovered because the iPads were somehow mishandled by children. In addition, there was a case in which a child entered the prohibited area while the guardian was not looking. We learned that systems that may be used by children should not only be safe, but should also take into account the possibility of unexpected use.

(3) Nighttime power outage

There was a case where the batteries of devices and computers ran out due to a nighttime power outage as part of routine maintenance. In another case, the system was disconnected from the network due to the maintenance of network equipment. We learned that we should keep in mind the possibility of power outages and the maintenance of network equipment in public facilities.

(4) Finding bugs in long-term operation

A program for generating sounds suddenly crashed a few weeks after the exhibition started. The cause of the crash was found to be that the program had cached all of the generated sound logs, which had grown to 500 GB, putting pressure on the memory. We learned that we should pay attention to the existence of bugs, such as a memory leak and unintended data caching, that may become apparent after a long period of operation.
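One common way to guard against this kind of unbounded growth is to cap the number of retained log entries. The sketch below uses a fixed-size buffer from the Python standard library; the class name and the default limit are hypothetical, and this is not the fix that was applied to the exhibition software, which the text does not describe.

```python
from collections import deque


class BoundedLog:
    """Keep only the most recent entries so memory use stays bounded."""

    def __init__(self, max_entries: int = 10_000):
        self._entries = deque(maxlen=max_entries)

    def append(self, entry: str) -> None:
        self._entries.append(entry)  # the oldest entry is dropped when full

    def __len__(self) -> int:
        return len(self._entries)

    def recent(self, n: int) -> list:
        """Return up to n of the newest entries, oldest first."""
        return list(self._entries)[-n:] if n > 0 else []


log = BoundedLog(max_entries=3)
for i in range(10):
    log.append(f"sound {i}")
print(len(log), log.recent(2))
```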

(5) Problems related to the specifications of commercial products

Sight consisted of a commercial speaker, a mobile battery, a tracking sensor, a Bluetooth receiver, and cables. Because we did not fully understand the specifications of these products, we encountered many unexpected problems in building the system (Fig. 3). For example, we were not aware that the mobile battery was designed to turn off when the power cable was disconnected. As a result, the battery was turned off by the disconnection operation in the morning (Fig. 8) and did not function at all. To solve this problem, we asked the museum staff to press the power button, which was an additional operation (Fig. 13). As another example, the cables were thicker than expected and did not fit into the housing of Sight, so we had to make room by sanding the molded device.

As a practice for using commercial products, we learned that we should examine their specifications and understand their behaviors at the prototype stage, before integrating them into the system.

(6) Running out of sensor batteries due to insufficient current

In this exhibition, to simplify the structure of Sight, we prepared a branching USB cable to power the tracking sensor and the Bluetooth receiver simultaneously. However, immediately after the exhibition started, one or the other of the devices frequently ran out of battery during the day. Debugging with a USB ammeter revealed that the current that could be supplied to both devices simultaneously depended on the cables used. Consequently, the problem was solved by replacing the cables. We learned the importance of confirming the operation of a system by actual measurement, even if it is theoretically feasible in terms of device specifications.
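The check described above, comparing the current each device needs with the current actually measured through a given cable, can be sketched as follows. This is an illustration only: the function name, the device names, and all current values are hypothetical and are not measurements from the exhibition.

```python
from typing import Dict, List


def undersupplied_devices(required_ma: Dict[str, float],
                          measured_ma: Dict[str, float]) -> List[str]:
    """Return the devices whose measured current is below what they need.

    A device missing from measured_ma is treated as receiving 0 mA.
    """
    return sorted(
        name
        for name, need in required_ma.items()
        if measured_ma.get(name, 0.0) < need
    )


if __name__ == "__main__":
    # Hypothetical requirements and ammeter readings, in milliamperes.
    required = {"tracking_sensor": 500.0, "bluetooth_receiver": 100.0}
    with_cable_a = {"tracking_sensor": 350.0, "bluetooth_receiver": 100.0}
    with_cable_b = {"tracking_sensor": 520.0, "bluetooth_receiver": 110.0}

    print("cable A:", undersupplied_devices(required, with_cable_a))
    print("cable B:", undersupplied_devices(required, with_cable_b))
```

Running such a comparison for each candidate cable before installation makes a shortfall visible at the prototype stage rather than during the exhibition.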

4.3 Practices Related to the Contents of Sight

So far, we have shown the practices for designing an exhibition that provides hands-on experiences of a wearable device to more than several hundred people per day. Finally, we introduce several practices related to the development of Sight.

(1) Visual and auditory parameters to be converted

In considering the contents of the hands-on experience, we focused on providing an easy-to-understand experience to convey the concept of Sight to people in a short time. To this end, we attempted to devise a reasonable mapping rule for converting visual information into sound.

We considered color, shape, and texture as parameters that characterize the objects' appearance, and assigned these parameters to the pitch, amplitude, and timbre of the sound, which are independent components of sound. The specific conversion algorithms for the parameters are outside the scope of this paper and are not explained here. Through prototyping, we confirmed that the relationship between the objects' appearances and the converted sounds was consistent with our intuition.
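To make the idea of such a mapping rule concrete, the sketch below converts three appearance descriptors into three sound parameters. It is not the conversion algorithm used in Sight, which is not described in this paper. The pairing (color to pitch, shape to amplitude, texture to timbre), the descriptors `hue`, `roundness`, and `roughness`, and every numeric range are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Appearance:
    hue: float        # 0.0-1.0, hypothetical color descriptor
    roundness: float  # 0.0-1.0, hypothetical shape descriptor
    roughness: float  # 0.0-1.0, hypothetical texture descriptor


@dataclass
class SoundParams:
    frequency_hz: float  # pitch
    amplitude: float     # loudness, 0.0-1.0
    harmonics: int       # number of partials, a crude stand-in for timbre


def clamp01(value: float) -> float:
    """Limit a value to the range 0.0-1.0."""
    return max(0.0, min(1.0, value))


def sonify(appearance: Appearance) -> SoundParams:
    """Map each visual descriptor to one independent sound parameter."""
    # Assumed: hue sweeps two octaves, from 220 Hz up to 880 Hz.
    frequency = 220.0 * (2.0 ** (2.0 * clamp01(appearance.hue)))
    # Assumed: rounder shapes sound louder, with a floor of 0.2.
    amplitude = 0.2 + 0.8 * clamp01(appearance.roundness)
    # Assumed: rougher textures add partials, from 1 up to 8.
    harmonics = 1 + round(7 * clamp01(appearance.roughness))
    return SoundParams(frequency, amplitude, harmonics)


if __name__ == "__main__":
    print(sonify(Appearance(hue=0.5, roundness=1.0, roughness=0.0)))
```

Because each descriptor drives exactly one sound parameter, changing only the color of an object changes only the pitch in this sketch, which is the kind of one-to-one relationship that a short hands-on experience can convey.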

After the exhibition, we evaluated the participants' experience by summarizing the responses to the iPad question, “What kind of scenery did you feel from the sounds?” Of the respondents, 14.0% mentioned the color of the objects, 40.1% mentioned their shape, and 32.7% mentioned their texture (valid responses N=5,139); 63.1% mentioned at least one of the three parameters. In addition, responses to the question on how long the experience of Sight lasted showed that 97.4% of the respondents spent less than 5 minutes on it (valid responses N=6,007). These results indicate that more than half of the participants were able to identify at least one of the targeted visual parameters in a limited experience time. Therefore, although the parameters were selected subjectively, we believe that our parameter selection was successful to some extent.
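As a quick check of the arithmetic, the reported percentages can be converted into approximate respondent counts. The sketch below assumes that all four percentages share the same denominator (N=5,139); the counts are rounded reconstructions, not figures reported in the survey data.

```python
VALID_RESPONSES = 5139  # valid responses to the scenery question

# Percentages reported in the text.
shares = {"color": 14.0, "shape": 40.1, "texture": 32.7}
AT_LEAST_ONE = 63.1


def approx_count(percent: float, n: int = VALID_RESPONSES) -> int:
    """Approximate number of respondents behind a percentage."""
    return round(n * percent / 100)


counts = {name: approx_count(p) for name, p in shares.items()}
at_least_one_count = approx_count(AT_LEAST_ONE)

# The three shares add up to more than the at-least-one share, which is
# only possible if some respondents mentioned more than one parameter.
excess_points = round(sum(shares.values()) - AT_LEAST_ONE, 1)

if __name__ == "__main__":
    print(counts, at_least_one_count, excess_points)
```

Under this assumption, roughly 3,200 respondents mentioned at least one targeted parameter, and the gap between the summed shares (86.8%) and the at-least-one share (63.1%) reflects respondents who mentioned two or three parameters.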

(2) Object design

To enable users to easily grasp the relationship between the visual and auditory parameters, we devised a way of arranging the objects (see Fig. 2). On the left side of the experience space, we placed primitive objects made of paper with different colors and shapes. On the right side, we placed spherical objects of different textures. The spherical objects were selected with the regional characteristics and the season of the exhibition in mind, to entertain the visitors. For example, as Kanazawa is famous for sake brewing and faces the sea, cedar and glass balls were chosen to reflect the locale. As another example, a watermelon was installed in the first half of the exhibition, and a pumpkin in the second half. As far as we observed, children in particular were often interested in these objects. We therefore consider that the selection of the objects was successful.

(3) Considerations for the 3D modeling of Sight

In modeling Sight, we aimed not only to design a safe device but also to provide a user-friendly UI/UX while maintaining the aesthetics of Sight. In particular, considering that Sight is a wearable device that users hold in their hands, we paid attention to the following points in the modeling (see Fig. 12).

- We wanted Sight to be as light as possible since it is supported by the hands. Therefore, we tried to make it as compact as possible under the constraint of “a circular shape that does not contact the face.” To achieve this, we routed the internal cables as compactly as possible to minimize wasted space. Our efforts to reduce the weight also reduced the production cost since less printing filament was used. We believe this practice was successful because we often observed children experiencing Sight without fatigue (see Fig. 6).

- In relation to the above, we considered the volume of each part model and the mounting locations of the devices in order to balance the weight between the front and back and between the left and right sides, so that the hands could support Sight with no effort other than lifting it.

- We designed the grasping part to be thin so that the user could intuitively hold Sight. In addition, we designed Sight so that the left and right speakers are positioned closest to the ears when the user holds Sight correctly.

- As an additional effort to prevent the device from being held back to front or upside down, we provided instructions on how to use Sight (Fig. 5). In addition, to emphasize how to hold it, an acrylic plate on top of Sight's display stand was engraved with a shape that fits Sight only when it is placed in the correct orientation. Note that the shape of Sight's bottom surface differs when it is flipped upside down because of the shape of the tracking sensor.

- The parts needed to be firmly assembled to endure repeated use, while allowing Sight to be quickly disassembled when there was a problem with the devices inside. Therefore, we modeled the parts so that they could be assembled with screws, without adhesives or other irreversible methods. We were able to disassemble Sight easily, yet it never broke apart on its own during the exhibition. Therefore, we consider that this practice was successful.

5. Concluding Remarks

This paper aimed to share our practices for designing demonstrations of an HCI device, taking the exhibition at the 21st museum as an example. This exhibition required a system that ran stably, remotely, for a long period while providing hands-on experiences to more than several hundred people per day. We believe that the practices derived from our trials and errors are useful to readers who are considering an exhibition similar to this case.

In addition, we attempted to systematize the practices using a tool from the field of engineering design. We believe that the organized practices are more likely to provide general knowledge beyond an individual case. For example, a researcher who wants to realize an exhibition that can be operated remotely could refer to our corresponding solutions even if the exhibition is not held at a museum. We hope that the systematization of practices will spread to the HCI community in the future to collect useful knowledge for designing demonstrations.

Acknowledgments

We are grateful to Dr. Takatoku Nishi and Anna Watanabe for the collaboration on the design of the exhibition space. We are grateful to all the staff of the 21st Century Museum of Contemporary Art, Kanazawa, especially Koichi Nakata, for their warm support. We would like to express our sincere gratitude to the Graduate Program for Social ICT Global Creative Leaders at The University of Tokyo and the DMM.make AKIBA Scholarship Program for their long-term support for the Sight project.

References

- [1] “SKIP CITY Sai-no-kuni Visual Plaza: what do animals see?”. 〈http://www.skipcity.jp/vm/eyes2018/〉 (accessed 2021-08-04).

- [2] Wake, N., Suzuki, R., Munakata, Y. and Fushimi, R. Sight: Sonification of affordances for the stand alone blind navigation, IEEE TENSYMP (2017).

- [3] “21st Century Museum of Contemporary Art, Kanazawa: Sight”. 〈https://www.kanazawa21.jp/data_list.php?g=45&d=1756〉 (accessed 2021-08-04).

- [4] “Digital and Art (International symposium): Sonification of visual information for a new perspective on seeing”. 〈https://www.u-tokyo.ac.jp/focus/ja/events/e_z0109_00099.html〉 (accessed 2021-08-04).

- [5] “DCEXPO: Sight”. 〈https://www.dcexpo.jp/archives/2015/exhibition_index.html〉 (accessed 2021-08-04).

- [6] “TEPIA: Technology Expo to Unleash Human Potential”. 〈https://www.tepia.jp/exhibition/event/2016autumn02/〉 (accessed 2021-02-28).

- [7] “The University of Tokyo, III exhibition Extra: Sight”. 〈https://iiiexhibition.com/log/iiiEx2015/〉 (accessed 2021-08-04).

- [8] Nakao, M. Creative Design (in Japanese). Maruzen (2003).

- [9] “21st Century Museum of Contemporary Art, Kanazawa: Report 2017”. 〈http://www.kanazawa21.jp/files/report_2017.pdf〉 (accessed 2021-08-04).

- [10] “VIVE”. 〈https://www.vive.com/eu/accessory/vive-tracker/〉 (accessed 2021-08-04).

- [11] “SteamVR”. 〈https://store.steampowered.com/steamvr/〉 (accessed 2021-08-04).

- [12] “Ableton Live”. 〈https://www.ableton.com/en/live/〉 (accessed 2021-08-04).

- [13] “Max for Live”. 〈https://www.ableton.com/en/live/max-for-live/〉 (accessed 2021-08-04).

- [14] “Afinia H480 3D Printer”. 〈https://afinia.com/3d-printers/h480/〉 (accessed 2021-08-04).

Naoki Wake (naoki.wake@14.alumni.u-tokyo.ac.jp)

He received a B.S. degree in Engineering from the University of Tokyo in 2014 and a Ph.D. degree in Information Science and Technology from the University of Tokyo in 2019. He is currently with Microsoft Corp. His research interests include auditory neuroscience, neurorehabilitation, and human-computer interaction.

Ryohei Suzuki

He received M.Sc. degrees in Computer Science and Physics from the University of Tokyo in 2016 and 2020, respectively. He is currently working at Preferred Networks, Inc. as a research engineer. His research interests include human-computer interaction and machine learning.

Yuri Munakata

He graduated from the Department of Information Design, Tama Art University in 2016. Since 2016, he has worked for Dentsu Inc. as a planner. His specialty is designing advertising communication from various perspectives.

Ryohei Fushimi

He received an M.Sc. degree in Interdisciplinary Information Studies from the University of Tokyo in 2017. Since 2016, he has worked for Google Japan. He currently works on Google Maps for iOS as a senior software engineer.

Resubmission received: June 14, 2021

Accepted: July 26, 2021
